Taken from Intel Performance Libraries, Intel® MPI Library Over Libfabric*

What is Libfabric?

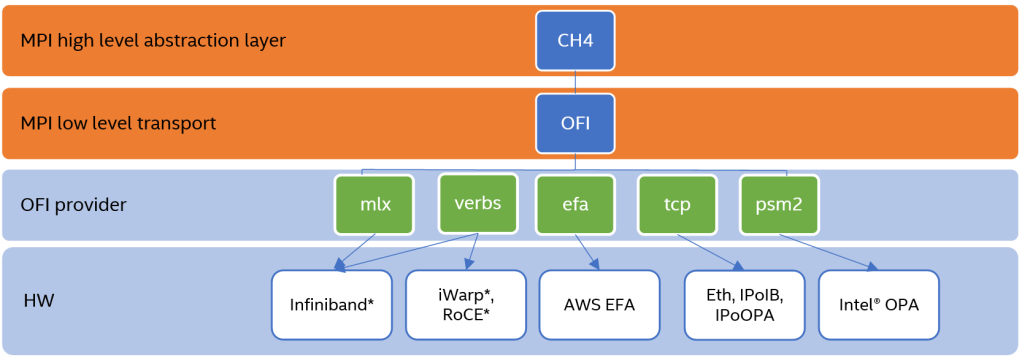

Libfabric is a low-level communication abstraction for high-performance networks. It hides most transport and hardware implementation details from middleware and applications to provide high-performance portability between diverse fabrics.

Using the Intel MPI Library Distribution of Libfabric

By default, mpivars.sh sets up the environment to use the version of libfabric shipped with the Intel MPI Library. To disable this, set the I_MPI_OFI_LIBRARY_INTERNAL environment variable or pass the -ofi_internal option to mpivars.sh (the default is ofi_internal=1).

# source /usr/local/intel/2018u3/impi/2018.3.222/bin64/mpivars.sh -ofi_internal=1

# I_MPI_DEBUG=4 mpirun -n 1 IMB-MPI1 barrier

[0] MPI startup(): libfabric version: 1.7.2a-impi

[0] MPI startup(): libfabric provider: verbs;ofi_rxm

[0] MPI startup(): Rank Pid Node name Pin cpu

[0] MPI startup(): 0 130358 hpc-n1 {0,1,2,3,4,5,6,7,8,9,10,11,12,13,14,15,16,17,18,19,20,21,22,23,24,25,26,27,28,29,30,31}

#------------------------------------------------------------

# Intel(R) MPI Benchmarks 2019 Update 4, MPI-1 part

#------------------------------------------------------------

# Date : Thu May 20 12:57:03 2021

# Machine : x86_64

# System : Linux

# Release : 3.10.0-693.el7.x86_64

# Version : #1 SMP Tue Aug 22 21:09:27 UTC 2017

# MPI Version : 3.1

# MPI Thread Environment:

# Calling sequence was:

# IMB-MPI1 barrier

# Minimum message length in bytes: 0

# Maximum message length in bytes: 4194304

#

# MPI_Datatype : MPI_BYTE

# MPI_Datatype for reductions : MPI_FLOAT

# MPI_Op : MPI_SUM

#

#

# List of Benchmarks to run:

# Barrier

#---------------------------------------------------

# Benchmarking Barrier

# #processes = 1

#---------------------------------------------------

#repetitions t_min[usec] t_max[usec] t_avg[usec]

1000 0.08 0.08 0.08

# All processes entering MPI_Finalize
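Most of the output above is benchmark detail. To check only which libfabric build and provider were picked up, the two relevant startup lines can be filtered out. The following is a minimal sketch; the function name show_libfabric_info is made up for illustration, and it assumes the startup lines have the same format as in the output shown above.

```shell
# Print only the libfabric version and provider lines from I_MPI_DEBUG output.
# Reads the output on stdin. show_libfabric_info is a hypothetical helper name.
show_libfabric_info() {
    # Keep the two startup lines of interest, then drop the "[rank] MPI startup(): " prefix.
    grep -E 'MPI startup\(\): libfabric (version|provider):' \
        | sed 's/^.*MPI startup(): //'
}

# Usage (assumes mpirun and IMB-MPI1 are on PATH after sourcing mpivars.sh):
#   I_MPI_DEBUG=4 mpirun -n 1 IMB-MPI1 barrier 2>&1 | show_libfabric_info
```

Running the same pipeline after sourcing mpivars.sh with a different -ofi_internal value makes it easy to compare the reported version and provider.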

Changing to -ofi_internal=0

# source /usr/local/intel/2018u3/impi/2018.3.222/bin64/mpivars.sh -ofi_internal=0

# I_MPI_DEBUG=4 mpirun -n 1 IMB-MPI1 barrier

[0] MPI startup(): libfabric version: 1.1.0-impi

[0] MPI startup(): libfabric provider: mlx

.....

.....

Common OFI Controls

To select the OFI provider from the libfabric library, set I_MPI_OFI_PROVIDER to the name of the OFI provider to load:

export I_MPI_OFI_PROVIDER=tcp

Logging Interfaces

FI_LOG_LEVEL=<level> controls the amount of logging data that is output. The following log levels are defined:

- Warn: Warn is the least verbose setting and is intended for reporting errors or warnings.

- Trace: Trace is more verbose and is meant to include non-detailed output helpful for tracing program execution.

- Info: Info is high traffic and meant for detailed output.

- Debug: Debug is high traffic and is likely to impact application performance. Debug output is only available if the library has been compiled with debugging enabled.
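As a minimal sketch of how the levels above might be used, the helper below checks a level name against the four listed values before exporting FI_LOG_LEVEL. The function name set_fi_log_level is hypothetical and not part of libfabric or the Intel MPI Library; passing the level in lower case is an assumption based on common usage.

```shell
# Export FI_LOG_LEVEL after checking the name against the four levels listed above.
# set_fi_log_level is a hypothetical helper, not part of libfabric or Intel MPI.
set_fi_log_level() {
    # Lower-case the argument so "Warn" and "warn" are treated alike.
    level=$(printf '%s' "$1" | tr '[:upper:]' '[:lower:]')
    case "$level" in
        warn|trace|info|debug)
            export FI_LOG_LEVEL="$level"
            ;;
        *)
            # Leave FI_LOG_LEVEL untouched and report the problem on stderr.
            echo "unknown log level: $1 (expected warn, trace, info or debug)" >&2
            return 1
            ;;
    esac
}

# Usage (assumes mpirun and IMB-MPI1 are available in the environment):
#   set_fi_log_level info && mpirun -n 1 IMB-MPI1 barrier
```

Starting with warn and raising the level only while investigating a problem keeps the output manageable, since the more verbose levels generate a lot of traffic.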

References: