Take a look at the AMD-AOCC User Guide if you wish to compile C/C++ and Fortran code on AMD platforms. You may also want to look at AMD Zen Software Studio, which includes AMD compilers, libraries, and profiling tools.

AMD

AMD’s 96 Core Epyc Genoa CPU faster than Intel’s Sapphire Rapids

Taken from the article “AMD’s 96 Core Epyc Genoa CPU is Over 70% Faster than Intel’s Xeon Sapphire Rapids Flagship in 2S Mode”

Linux kernel Compilation

…The dual Epyc 9554 (64 cores per socket) is 25-30% faster than the top Intel combo, while the Dual 9654 (96 cores per socket) is over 70% faster than the Sapphire Rapids-SP flagship…

Kernel-based Virtual Machine (KVM)

…64-core Epyc 9554 pair is 25% faster than the latter makes it hard to defend the Intel offering. The Xeon Platinum 8490H mostly competes with the last-gen Milan and Milan-X flagships…

MariaDB and Nginx

…The Genoa parts are faster, with a lead of 10% to 40%, while the 64-core Milan-X deals with Sapphire Rapids…

AMD’s 96 Core Epyc Genoa CPU is Over 70% Faster than Intel’s Xeon Sapphire Rapids Flagship in 2S Mode

AMD is set to launch new HPC Products on 8th November

The leading semiconductor manufacturer AMD’s Milan-X EPYC series processors could be expected at this conference. Judging from the latest news, this series of processors uses unique 3D V-Cache technology (3D caches act as a rapid refresher and use a novel hybrid bonding technique), even before the Vermeer-X consumer product line. The line is based on the Zen 3 microarchitecture. AMD and Intel are always pitted against each other to see which is better, but the comparison always ends up neutral; both are relentlessly attempting to prove their side is the better one. AMD is also expected to launch its first machine based on a Multi-Chip Module (MCM) design, even earlier than NVIDIA GH100 (Hopper) and Intel’s Ponte Vecchio (Xe-HPC).

AMD is set to launch new HPC products on November 8 at the “Accelerated Data Center Premier”

AMD strong comeback

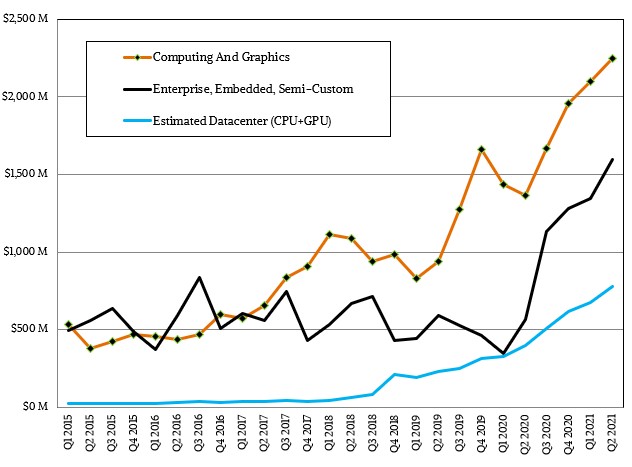

From The Next Platform article “AMD is finally trusted in the Datacentre again”:

AMD turned in the best quarter that we can remember, and is now firmly in place as the gadfly counterbalance to the former hegemony of Intel. And that is good for everyone who buys a game console, a PC, an edge device, and a server. And the game is only going to get more interesting with Intel getting its chip together and preparing for a long battle with AMD and other XPU usurpers in chip design and as well as Taiwan Semiconductor Manufacturing Corp in chip etching and packaging.

We do get some hints, however. Lisa Su, AMD’s president and chief executive officer, said that AMD’s datacenter business – at this point meaning Epyc CPUs and Instinct GPU accelerators – comprised more than 20 percent of the company’s overall sales, and the big driver in this quarter was not just second generation “Rome” Epyc 7002 and third generation “Milan” Epyc 7003 server chips – Rome is still outselling Milan, but the crossover is coming in the third quarter of this year – but the Radeon Instinct MI100 GPU accelerators launched last fall. The datacenter GPU business more than doubled from a year ago, according to Su, and AMD expects it to continue to grow in the second half of the year as the 1.5 exaflops “Frontier” supercomputer at Oak Ridge National Laboratory in the United States, the as-yet-unnamed pre-exascale system at Pawsey Supercomputing Center in Australia, and the Lumi pre-exascale system in Finland all get their Radeon Instinct motors installed.

The Next Platform “AMD is finally trusted in the Datacentre again”

AMD HPC User Forum Networking Meeting at ISC21


For more information, see AMD HPC User Forum Networking Meeting

Wednesday, June 30, 2021

7:00am – 8:15am (PDT)

10:00am – 11:15am (EDT)

4:00pm – 5:15pm (CEST)

To Register Live at ISC21: AMD HPC User Forum Networking Meeting – Registration (eventscloud.com)

- 7:00 – 7:15 am: Opening Remarks

- Mike Norman, PhD, Director, SDSC

- Brad McCredie, PhD, Corporate Vice President, AMD

- 7:15 – 7:20 am: Introduction of Forum

- Mary Thomas, PhD, AMD User Forum President, Computational Data Scientist, SDSC


- 7:20 – 7:50 am: Forum Members discuss their work and value of Forum

- Mahidhar Tatineni, PhD, SDSC, (User Forum Special Interest Group)

- Alastair Basden, PhD, HPC Technical Manager, Durham University

- Lorna Smith, Programme Manager, EPCC, University of Edinburgh

- Sagar Dolas, Program Lead – Future Computing & Networking, Surf

- Hatem Ltaief, PhD, Principal Research Scientist, KAUST

- Marc O’Brien, Cancer Research UK Cancer Institute, Cambridge University

- 7:50 – 8:00 am: Q&A

Great Tools for AMD EPYC

- AMD EPYC™ Processor Selector Tool with Kit Configurator

Compare your current CPU with AMD EPYC™ CPUs on price, cores, and performance, then build out your ideal server.

- AMD EPYC™ Server Virtualization TCO Estimation Tool

See the potential value AMD EPYC™ CPUs may deliver for your datacenter. Input your VM requirements and environment factors like power, real estate cost, select your virtualization license, and more. Compare your current x86 based server solution to a solution powered by AMD EPYC™ processors.

- AMD EPYC™ Bare Metal TCO Estimation Tool

Discover the potential value that AMD EPYC™ CPUs can deliver for your bare metal server environment. Compare by server count, performance, or total budget. Then select your filter, your processor comparisons, and system memory requirements. Choose 3, 4, or 5 year time frames for your AMD EPYC™ Bare Metal TCO estimation.

- AMD Cloud Cost Advisor

Discover the potential value AMD EPYC™ CPUs bring to the cloud with the latest cost analysis tool. AMD Cloud Cost Advisor helps with real-time insights into estimated cost savings when switching to cloud instances powered by AMD within the same cloud service provider.

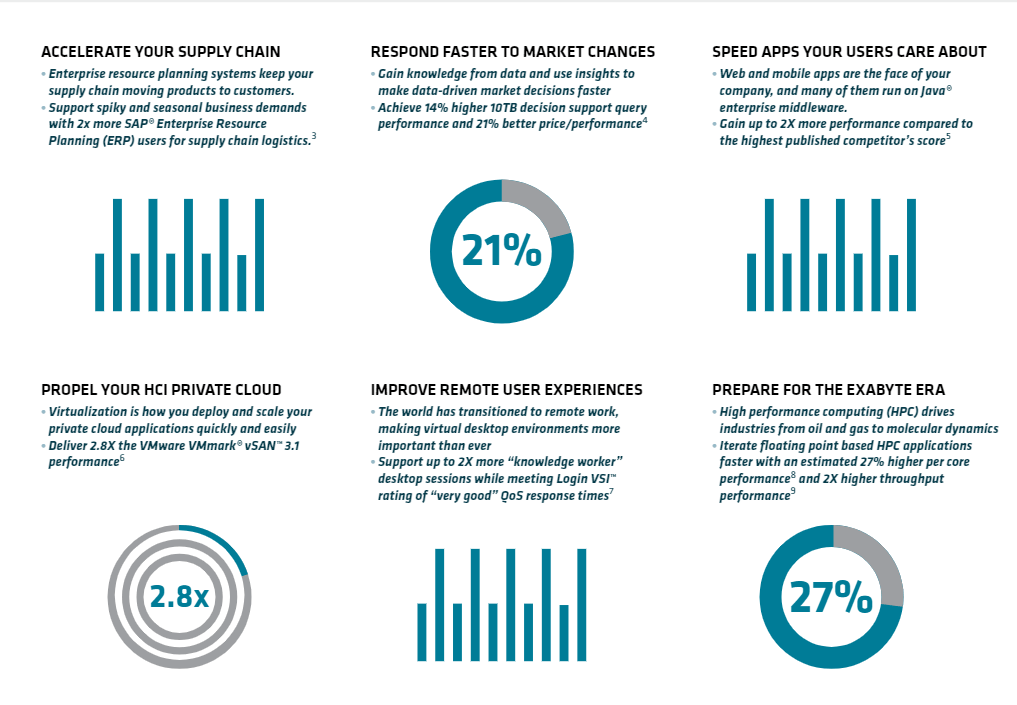

Product Brief with AMD EPYC 7003

3rd Gen AMD EPYC™ processors raise the bar once more for workload performance, with up to 19% more instructions per clock (IPC). No matter the job, you can drive faster time to results, provide more and better data for decisions, and achieve better business outcomes. With our leadership approach, the world’s highest performance server CPU, AMD EPYC 7763, and AMD Infinity Architecture deliver innovation: up to 32MB of L3 cache per core, synchronized fabric and memory clock speeds designed for improved performance, plus hardware and virtual security features to help safeguard your business, right out of the box.

AMD EPYC Product Brief (Technical | In-depth details about your new AMD EPYC 7003 Series Processers (pathfactory.com))

References:

Introducing 3rd Gen AMD Processors for the Modern Data Centre

Join CEO Dr. Lisa Su, CTO Mark Papermaster, Senior VP and GM of Datacenter and Embedded Solutions Business Group, Forrest Norrod, Senior VP and GM of Server Business Unit, Dan McNamara, and appearances by industry-leading data center strategic partners and customers in this digital launch of the 3rd Gen AMD EPYC™ Processors.

Chapters:

00:00 – Intro

01:00 – Introducing 3rd Gen AMD EPYC

07:48 – “Zen 3” Architecture for Data Center

15:24 – 3rd Gen AMD EPYC Portfolio & Performance

20:44 – HPC Performance Leadership & Exascale Computing

25:44 – Powering the Most Important Cloud Services

35:54 – Accelerating Enterprise Workloads

40:42 – AMD EPYC Solution Ecosystem

49:22 – Conclusion

AMD unveils new EPYC processor for high performance computing

AMD has today launched a new EPYC processor designed for the data center industry, cloud, and enterprise customers.

The AMD EPYC 7003 Series central processing units (CPUs) include up to 64 Zen 3 cores per processor, and also include the EPYC 7763 server processor for a performance and per-core cache memory boost. The 7003 series also includes PCIe 4 connectivity and eight memory channels per processor.

Security features include AMD Infinity Guard and a new feature called Secure Encrypted Virtualization-Secure Nested Paging (SEV-SNP). This adds memory integrity protection capabilities to create an isolated execution environment. This can help to prevent hypervisor-based attacks.

According to AMD, cloud providers can leverage the 7003 Series’ high core density, security features, and improved integer performance.

Further, high performance computing (HPC) customers can leverage the 7003 series’ faster time to results due to more I/O and memory throughput, and the Zen 3 cores.

For the full article, do take a look at https://itbrief.co.nz/story/amd-unveils-new-epyc-processor-for-high-performance-computing

Intel MPI Application Tuning for AMD EPYC

If you wish to run Intel MPI on AMD EPYC servers, you have to change your MPI launch options to include the following:

-genv I_MPI_DEBUG=5 -genv I_MPI_PIN=1 -genv KMP_AFFINITY verbose,granularity=fine,compact

Explanation of Options:

-genv I_MPI_DEBUG=5

(Enable debug output to print transport and pinning information)

-genv I_MPI_PIN=1

(Enables rank pinning. Use in conjunction with the previous option)

-genv KMP_AFFINITY verbose,granularity=fine,compact

(Sets the OpenMP thread affinity. For more information, see Thread Affinity Interface (Linux* and Windows*))
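As a rough illustration, the options above could be combined into a single launch line as sketched below. The rank count (`NP=128`), the binary name (`./my_app`), and the `RUN` switch are hypothetical placeholders and not part of the original settings; adjust them for your own job. The script only prints the command unless `RUN=1` is set, so it can be checked without an MPI installation.

```shell
#!/bin/sh
# Hypothetical placeholders: rank count and application binary.
NP=128
APP=./my_app

# Options from the post: debug output, rank pinning, OpenMP thread affinity.
MPI_OPTS="-genv I_MPI_DEBUG=5 -genv I_MPI_PIN=1 -genv KMP_AFFINITY verbose,granularity=fine,compact"

# Assemble the full launch line.
CMD="mpirun -np $NP $MPI_OPTS $APP"

# Always show the command; run it only when RUN=1 is set in the environment.
echo "$CMD"
if [ "${RUN:-0}" = "1" ]; then
  $CMD   # unquoted on purpose so the string splits into separate arguments
fi
```

With `I_MPI_DEBUG=5`, the start of the job output should include the pinning information, which is a quick way to confirm that ranks landed on the cores you expected.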

References: