Taken from Installing Nvidia DOCA OFED. Do read the documentation for more information. Other relevant documentation includes:

Quick Reference

Installation Profiles

| DOCA-Host Profile | Description |
|---|---|
| doca-ofed | Allows you to install the same drivers and tools of MLNX_OFED using the DOCA-Host package, but without other DOCA functionality. |
| doca-network | Intended for users who want to use only the networking functionality of the DOCA-Host package. |
| doca-all | Intended for users who want to use the full extent of DOCA drivers and libraries, the full DOCA-Host installation. |

# Remove the installed DOCA OFED software from the host.

for f in $(rpm -qa | grep -i doca ) ; do sudo yum -y remove $f; done

# Remove the installed MLNX_OFED software.

sudo /usr/sbin/ofed_uninstall.sh --force

sudo dnf autoremove

sudo dnf clean all -y

sudo dnf makecache -y

Download and Install NVidia RPM GPG Key

sudo wget http://www.mellanox.com/downloads/ofed/RPM-GPG-KEY-Mellanox-SHA256

sudo rpm --import RPM-GPG-KEY-Mellanox-SHA256

DOCA-OFED

Create the repo file under /etc/yum.repos.d/:

sudo touch /etc/yum.repos.d/doca.repo

Inside /etc/yum.repos.d/doca.repo, include the following:

[doca]

name=DOCA Online Repo

baseurl=https://linux.mellanox.com/public/repo/doca/3.2.1/rhel8/x86_64/

enabled=1

gpgcheck=0

Save and exit.
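The same file can also be written non-interactively with a heredoc, which is handy when scripting the setup across several hosts. This is a minimal sketch: `write_doca_repo` is a hypothetical helper name, and the target path is passed as an argument so the function can be tried against a scratch file before pointing it at /etc/yum.repos.d/doca.repo (which needs root). The contents are the ones listed above.

```shell
#!/usr/bin/env bash
# Hypothetical helper (not part of DOCA): write the repo definition
# shown above to the path given as the first argument.
write_doca_repo() {
    target="$1"
    # Quoted 'EOF' keeps the body literal (no variable expansion).
    cat > "$target" <<'EOF'
[doca]
name=DOCA Online Repo
baseurl=https://linux.mellanox.com/public/repo/doca/3.2.1/rhel8/x86_64/
enabled=1
gpgcheck=0
EOF
}

# Example (scratch file first, to inspect the result):
#   write_doca_repo /tmp/doca.repo && cat /tmp/doca.repo
```

To write the real file, run the script as root with /etc/yum.repos.d/doca.repo as the target, then refresh the metadata with `dnf makecache`.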

Install DOCA-OFED

sudo dnf install -y doca-ofed

Validating that OFED and RoCEv2 are working

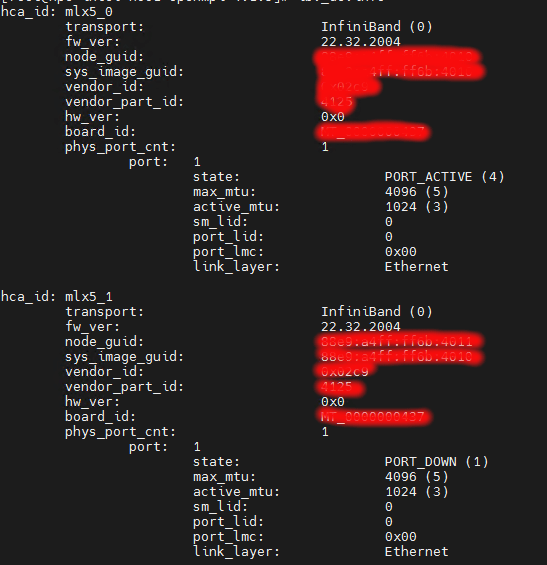

One of the fastest checks is to run ibstat, which lists each adapter with its port state and link layer:

CA 'mlx5_0'

CA type: MT4127

Number of ports: 1

Firmware version: 26.43.2026

Hardware version: 0

Node GUID: 0x5000e6030073b514

System image GUID: 0x5000e6030073b514

Port 1:

State: Down

Physical state: Disabled

Rate: 40

Base lid: 0

LMC: 0

SM lid: 0

Capability mask: 0x.....

Port GUID: 0x......

Link layer: Ethernet

CA 'mlx5_1'

CA type: MT4127

Number of ports: 1

Firmware version: 26.43.2026

Hardware version: 0

Node GUID: 0x.....

System image GUID: 0x.....

Port 1:

State: Active

Physical state: LinkUp

Rate: 25

Base lid: 0

LMC: 0

SM lid: 0

Capability mask: 0x.......

Port GUID: 0x.....

Link layer: Ethernet
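On hosts with several adapters the full listing gets long, so it can help to condense it to one line per port. The following is a sketch only: `summarize_ibstat` is a hypothetical helper name (not part of the OFED tools), and it assumes the field labels appear exactly as in the output above, with `Link layer:` as the last field of each port.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read ibstat-style text on stdin and print
# one summary line per port.
summarize_ibstat() {
    awk -v q="'" '
        # "CA <name>" opens a new adapter; skip the "CA type:" field.
        $1 == "CA" && $2 != "type:"      { ca = $2; gsub(q, "", ca) }
        # "Port 1:" opens a port; "Port GUID:" does not match the number test.
        $1 == "Port" && $2 ~ /^[0-9]+:$/ { port = $2; sub(/:$/, "", port) }
        $1 == "State:"                   { state = $2 }
        $1 == "Physical" && $2 == "state:" { phys = $3 }
        $1 == "Rate:"                    { rate = $2 }
        # "Link layer:" is assumed to close the port block, so print here.
        $1 == "Link" && $2 == "layer:" {
            printf "%s port %s: %s/%s rate=%s link_layer=%s\n", ca, port, state, phys, rate, $3
        }
    '
}

# Example:
#   ibstat | summarize_ibstat
```

For RoCE, the lines to look for are those with `link_layer=Ethernet` and a state of `Active/LinkUp`, as with mlx5_1 in the listing above.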

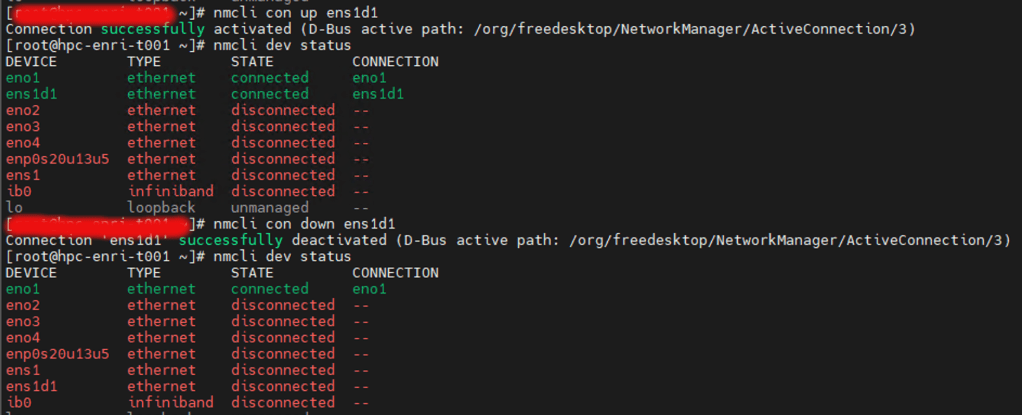

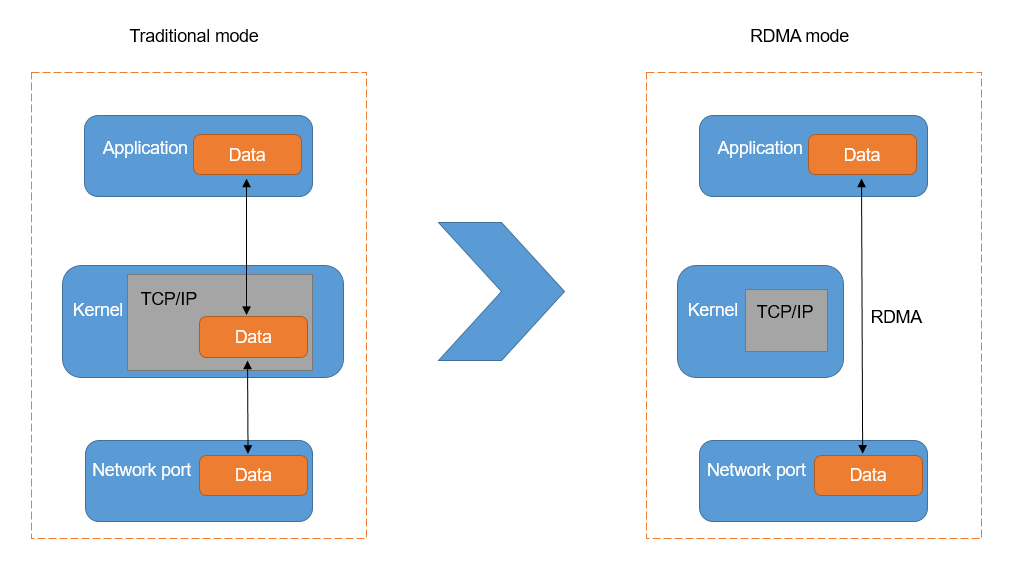

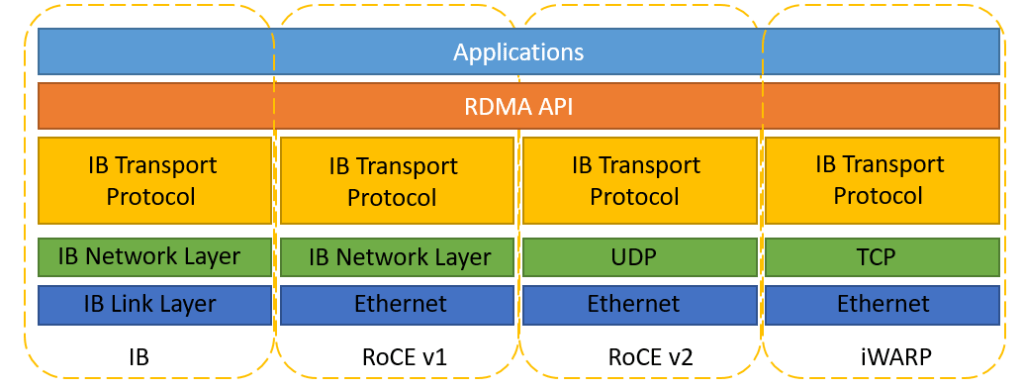

For further checks, see Installing RoCE using Mellanox (Nvidia) OFED package.