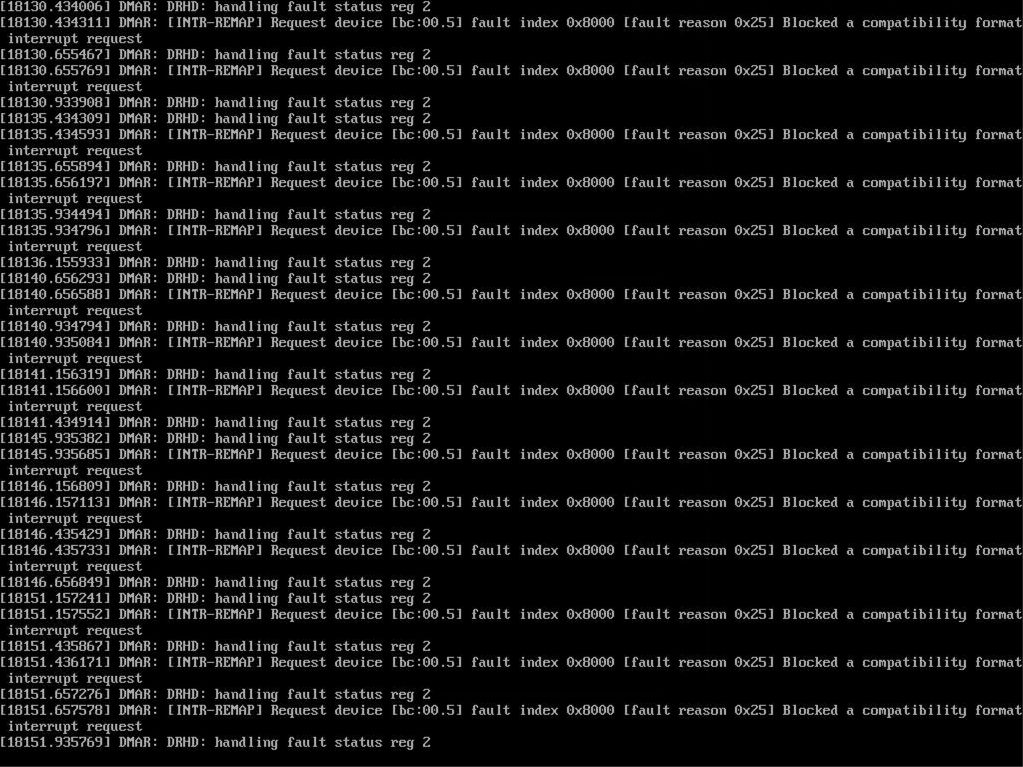

You may hit an error like the one shown above, where the possible reasons listed are:

- Host is unavailable. Please check that all hosts are available.

- Cannot launch hydra_bstrap_proxy or it crashed on one of the hosts. Make sure hydra_bstrap_proxy is available on all hosts and it has right permissions.

- Firewall refused connection. Check that enough ports are allowed in the firewall and specify them with the I_MPI_PORT_RANGE variable.

- pbs bootstrap cannot launch processes on remote host. You may try using -bootstrap option to select alternative launcher.

[mpiexec@hpc-node1] check_exit_codes (../../../../../src/pm/i_hydra/libhydra/demux/hydra_demux_poll.c:117): unable to run bstrap_proxy on hpc-npriv-g001 (pid 2778558, exit code 256)

[mpiexec@hpc-node1] poll_for_event (../../../../../src/pm/i_hydra/libhydra/demux/hydra_demux_poll.c:159): check exit codes error

[mpiexec@hpc-node1] HYD_dmx_poll_wait_for_proxy_event (../../../../../src/pm/i_hydra/libhydra/demux/hydra_demux_poll.c:212): poll for event error

[mpiexec@hpc-node1] HYD_bstrap_setup (../../../../../src/pm/i_hydra/libhydra/bstrap/src/intel/i_hydra_bstrap.c:1065): error waiting for event

[mpiexec@hpc-node1] HYD_print_bstrap_setup_error_message (../../../../../src/pm/i_hydra/mpiexec/intel/i_mpiexec.c:1027): error setting up the bootstrap proxies

[mpiexec@hpc-node1] Possible reasons:

[mpiexec@hpc-node1] 1. Host is unavailable. Please check that all hosts are available.

[mpiexec@hpc-node1] 2. Cannot launch hydra_bstrap_proxy or it crashed on one of the hosts. Make sure hydra_bstrap_proxy is available on all hosts and it has right permissions.

[mpiexec@hpc-node1] 3. Firewall refused connection. Check that enough ports are allowed in the firewall and specify them with the I_MPI_PORT_RANGE variable.

[mpiexec@hpc-node1] 4. pbs bootstrap cannot launch processes on remote host. You may try using -bootstrap option to select alternative launcher.

The solution is to add the -bootstrap ssh option to your mpiexec command:

$ mpiexec -bootstrap ssh ......

For example:

$ mpiexec -bootstrap ssh python3 python.text

Alternatively, you can put the following line in your .bashrc or PBS script:

export I_MPI_HYDRA_BOOTSTRAP=ssh
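
Since the ssh bootstrap starts its proxies over SSH, it generally needs non-interactive (passwordless) SSH access to every allocated host. The sketch below shows one way the export line might sit in a PBS job script alongside a quick SSH check. The helper names `unique_hosts` and `check_ssh_hosts`, the process count `-n 4`, and the executable `./my_mpi_app` are all placeholders for illustration and are not taken from the original setup.

```shell
#!/bin/bash
# Hypothetical helper: list each host in a PBS-style node file exactly once.
unique_hosts() {
    sort -u "$1"
}

# Hypothetical helper: try a non-interactive SSH login to every host.
# BatchMode=yes makes ssh fail instead of prompting for a password.
check_ssh_hosts() {
    local nodefile="$1" host failed=0
    for host in $(unique_hosts "$nodefile"); do
        if ssh -o BatchMode=yes -o ConnectTimeout=5 "$host" true 2>/dev/null; then
            echo "ok:   $host"
        else
            echo "FAIL: $host"
            failed=1
        fi
    done
    return "$failed"
}

# Inside a PBS job, PBS_NODEFILE points at the list of allocated hosts.
# Outside a job the variable is unset and this section is skipped.
if [ -n "${PBS_NODEFILE:-}" ]; then
    # Same effect as passing "-bootstrap ssh" on the mpiexec command line.
    export I_MPI_HYDRA_BOOTSTRAP=ssh
    # Placeholder launch line: adjust -n and the program to your job.
    check_ssh_hosts "$PBS_NODEFILE" && mpiexec -n 4 ./my_mpi_app
fi
```

If any host prints FAIL, mpiexec is not started. In that case, the SSH keys or host access for that node are probably worth checking before retrying.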