Pure Storage

Portworx Lightboard Sessions: Deploying Portworx on Kubernetes

Portworx Lightboard Sessions: Deployment Modes (Hyperconverged, Disaggregated)

In this short video, you will learn about the two main deployment modes in which Portworx can be installed on your infrastructure.

Portworx Lightboard Sessions: Understanding Storage Pools

In this short video, learn how Portworx clusters infrastructure together into classified storage resource pools for applications.

Portworx Lightboard Sessions: Portworx 101 (Overview)

Learn the basics of Portworx and how it can enable your stateful workloads. This video discusses the main components of the Portworx platform and how it creates a global namespace to provide virtual volumes for containers.

Portworx Lightboard Sessions: Why choose Portworx?

Portworx is the leading container data management solution for Kubernetes. This short video explains the Portworx value proposition along with some of its differentiating features, such as data mobility, application awareness and infrastructure independence.

RapidFile Toolkit v2.0 for FlashBlade

What is RapidFile Toolkit?

RapidFile Toolkit is a set of supercharged tools for efficiently managing millions of files using familiar Linux command line interfaces. RapidFile Toolkit is designed from the ground up to take advantage of Pure Storage FlashBlade's massively parallel, scale-out architecture, while also supporting standard Linux file systems. RapidFile Toolkit can serve as a high-performance, drop-in replacement for Linux commands in many common scenarios, which can increase employee efficiency, application performance, and business productivity. RapidFile Toolkit is available to all Pure Storage customers.

RapidFile

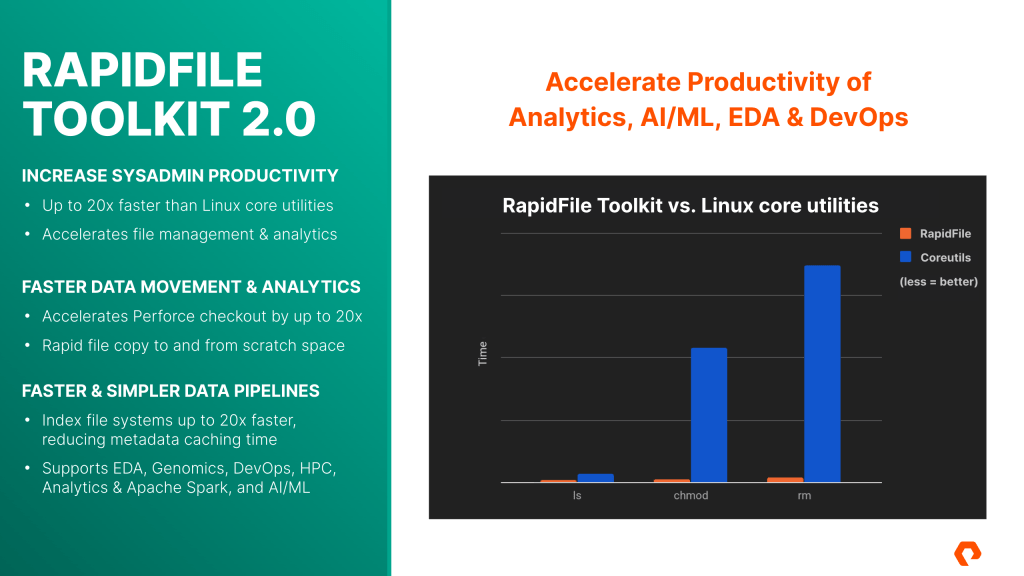

Benefits of RapidFile Toolkit (according to the Pure Storage site)

Increase SysAdmin Productivity

- Up to 20X faster than Linux Core Utilities

- Accelerates file management and analytics

Faster Data Movement & Analytics

- Accelerates Perforce Checkout by up to 20X

- Rapid file copy to and from scratch space

Faster & Simpler Data Pipelines

- Indexes file systems up to 20X faster, reducing metadata caching time

- Supports EDA, Genomics, DevOps, HPC, Analytics, Apache Spark and AI/ML

Commands

| Linux command | RapidFile Toolkit v2.0 | Description |
|---|---|---|
| ls | pls | Lists files & directories |
| find | pfind | Finds matching files |
| du | pdu | Summarizes file space usage |
| rm | prm | Removes files & directories |
| chown | pchown | Changes file ownership |
| chmod | pchmod | Changes file permissions |
| cp | pcopy | Copies files & directories |
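Because these commands are meant as drop-in replacements, one way to adopt them in existing scripts is to prefer the parallel binary when it is installed and fall back to the standard Linux command otherwise. A minimal sketch; the helper name `pick_tool` is hypothetical, and it assumes the RapidFile Toolkit binaries are on `PATH` when installed.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the RapidFile Toolkit command name if it is on
# PATH, otherwise print the name of the Linux command it replaces.
pick_tool() {
  local parallel_cmd="$1" linux_cmd="$2"
  if command -v "$parallel_cmd" >/dev/null 2>&1; then
    echo "$parallel_cmd"
  else
    echo "$linux_cmd"
  fi
}
```

For example, `DU=$(pick_tool pdu du)` followed by `"$DU" -sh /scratch/user1` runs `pdu` where the toolkit is installed and plain `du` elsewhere. This assumes both commands accept the same flags, as the `pdu -sh` example in the RapidFile Toolkit 1.0 section suggests.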

To download, you must be a Pure Storage customer or partner.

Download URL (login required):

Configuring NVMeoF RoCE For SUSE 15

The blog is taken from Configuring NVMeoF RoCE For SUSE 15.

The purpose of this blog post is to provide the steps required to implement NVMe-oF using RDMA over Converged Ethernet (RoCE) on SUSE Linux Enterprise Server (SLES) 15 and subsequent releases.

An important item to note is that RoCE requires a lossless network, so either global pause flow control or Priority Flow Control (PFC) must be configured on the network for smooth operation.

All of the steps below were implemented using Mellanox ConnectX-4 adapters.
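For orientation, host-side discovery and connection over RDMA is typically done with `nvme-cli`. The following is a minimal dry-run sketch, assuming the lossless network is already in place, the `nvme-rdma` kernel module is available, and the target exports subsystems on the conventional port 4420. The target address `192.168.10.10` and the `DRY_RUN` switch are placeholders of our own, not values from the original post; the script only prints the commands unless `DRY_RUN=0`.

```shell
#!/usr/bin/env bash
# Sketch of host-side NVMe-oF/RoCE setup with nvme-cli.
# TARGET_ADDR is a placeholder (assumption); 4420 is the conventional NVMe-oF port.
TARGET_ADDR="${TARGET_ADDR:-192.168.10.10}"
TARGET_PORT="${TARGET_PORT:-4420}"
DRY_RUN="${DRY_RUN:-1}"   # 1 = print commands only, 0 = execute them

# Print or execute a command depending on DRY_RUN
run() {
  if [ "$DRY_RUN" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

nvmeof_rdma_setup() {
  # Install the NVMe management CLI on SLES
  run zypper install -y nvme-cli
  # Load the NVMe over RDMA host driver
  run modprobe nvme-rdma
  # Ask the target which subsystems it exports over RDMA
  run nvme discover -t rdma -a "$TARGET_ADDR" -s "$TARGET_PORT"
  # Connect to every subsystem returned by discovery
  run nvme connect-all -t rdma -a "$TARGET_ADDR" -s "$TARGET_PORT"
  # Show the NVMe namespaces now visible to the host
  run nvme list
}
```

Calling `nvmeof_rdma_setup` prints the five commands for review; `DRY_RUN=0 TARGET_ADDR=<your target> nvmeof_rdma_setup` (as root) would run them.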

Session Trunking for NFS available in RHEL-8

This article is taken from "Is NFS session trunking available in RHEL?"

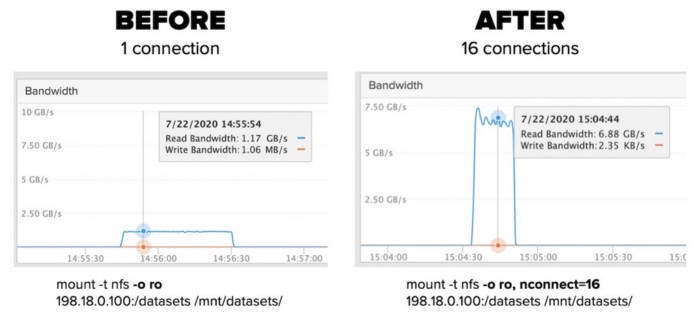

Session trunking, whereby a client can open multiple TCP connections to the same NFS server at the same IP address, is provided by the nconnect mount option. This feature is available in RHEL 8 with the following errata:

- RHSA-2020:4431 for the package kernel-4.18.0-240.el8 or later.

- RHBA-2020:4530 for the packages nfs-utils-2.3.3-35.el8 and libnfsidmap-2.3.3-35.el8 or later.
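To check whether a client already meets these minimum versions, one option is a version comparison with GNU `sort -V`. A minimal sketch; the `version_ge` and `check_nconnect_prereqs` helpers are hypothetical, and `sort -V` ordering only approximates RPM's own version comparison, so treat the result as a hint and rely on `rpm -q` or `dnf` for an authoritative answer.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeed when version $1 >= version $2.
# Uses GNU sort -V ordering, which only approximates RPM's version comparison.
version_ge() {
  [ "$(printf '%s\n%s\n' "$1" "$2" | sort -V | head -n 1)" = "$2" ]
}

# Minimum builds named in RHSA-2020:4431 and RHBA-2020:4530
MIN_KERNEL="4.18.0-240.el8"
MIN_NFS_UTILS="2.3.3-35.el8"

check_nconnect_prereqs() {
  local kernel nfs_utils
  kernel="$(uname -r)"
  if version_ge "$kernel" "$MIN_KERNEL"; then
    echo "kernel $kernel: OK"
  else
    echo "kernel $kernel: older than $MIN_KERNEL"
  fi
  # rpm -q exits non-zero when nfs-utils is not installed
  if nfs_utils="$(rpm -q --qf '%{VERSION}-%{RELEASE}\n' nfs-utils 2>/dev/null)"; then
    if version_ge "$nfs_utils" "$MIN_NFS_UTILS"; then
      echo "nfs-utils $nfs_utils: OK"
    else
      echo "nfs-utils $nfs_utils: older than $MIN_NFS_UTILS"
    fi
  else
    echo "nfs-utils: not installed (or rpm unavailable)"
  fi
}
```

Running `check_nconnect_prereqs` on the client prints one status line for the kernel and one for nfs-utils.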

On the client side, you can configure up to 16 connections:

[root@nfs-client ~]# mount -t nfs 192.168.0.2:/nfs-share /nfs-share -o nosharecache,nconnect=16

You can see the connections by using the command:

[root@nfs-client ~]# cat /proc/self/mountstats

......

......

RPC iostats version: 1.1 p/v: 100003/4 (nfs)

xprt: tcp 991 0 2 0 39 13 13 0 13 0 2 0 0

xprt: tcp 798 0 2 0 39 6 6 0 6 0 2 0 0

xprt: tcp 768 0 2 0 39 6 6 0 6 0 2 0 0

xprt: tcp 1013 0 2 0 39 4 4 0 4 0 2 0 0

xprt: tcp 828 0 2 0 39 4 4 0 4 0 2 0 0

xprt: tcp 702 0 2 0 39 2 2 0 2 0 2 0 0

xprt: tcp 783 0 2 0 39 2 2 0 2 0 2 0 0

xprt: tcp 858 0 2 0 39 2 2 0 2 0 2 0 0

.....

Someone has recorded a multi-fold performance increase when using nconnect with Pure Storage acting as the NFS server; see "Use nconnect to effortlessly increase NFS performance".
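Counting the `xprt:` lines in the mountstats output by hand gets tedious with several mounts. A small sketch that tallies RPC transports per NFS mount point, assuming the standard `/proc/self/mountstats` layout in which each mount begins with a `device <export> mounted on <mountpoint> with fstype <type>` line; the function name `count_nfs_xprts` is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print "<mountpoint> <number of xprt lines>" for each
# NFS mount found in a mountstats file (default: /proc/self/mountstats).
count_nfs_xprts() {
  local stats="${1:-/proc/self/mountstats}"
  awk '
    # "device <export> mounted on <mnt> with fstype <fs> ...": $5 = mount point, $8 = fstype
    $1 == "device" { mnt = $5; fs = $8; next }
    # Each xprt: line describes one RPC transport (TCP connection) of the current mount
    $1 == "xprt:" && fs ~ /^nfs/ { n[mnt]++ }
    END { for (m in n) print m, n[m] }
  ' "$stats"
}
```

On the client above, `count_nfs_xprts` would print `/nfs-share` followed by the number of transports the mount is using.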


RapidFile Toolkit 1.0

RapidFile Toolkit 1.0 (formerly PureTools) provides fast client-side alternatives for common Linux commands such as ls, du, find, chown, chmod, rm and cp that have been optimized for the high level of concurrency supported by FlashBlade NFS. You will be

To install on CentOS/RHEL:

% sudo rpm -U rapidfile-1.0.0-beta.5/rapidfile-1.0.0-beta.5-Linux.rpm
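After installing, a quick sanity check is whether the tools are on `PATH`. A minimal sketch; the helper `check_rapidfile_tools` is hypothetical, and the command names are taken from the examples in this section rather than from a complete list of what the package ships.

```shell
#!/usr/bin/env bash
# Hypothetical helper: report which RapidFile Toolkit 1.0 commands used in
# the examples below are on PATH; returns the number of missing commands.
check_rapidfile_tools() {
  local tool missing=0
  for tool in pdu pcp prm pchown pchmod; do
    if command -v "$tool" >/dev/null 2>&1; then
      echo "found:   $tool -> $(command -v "$tool")"
    else
      echo "missing: $tool"
      missing=$((missing + 1))
    fi
  done
  return "$missing"
}
```

A zero exit status from `check_rapidfile_tools` means all five commands were found.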

Examples:

Disk Usage:

% pdu -sh /scratch/user1

Copy Files:

% pcp -r -p -u /scratch/user1/ /backup/user1/

Remove Files:

% prm -rv /scratch/user1/

Change Ownership:

% pchown -Rv user1:usergroup /scratch/user1

Change Permissions:

% pchmod -Rv 755 /scratch/user1
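The "up to 20X faster" figures in the benefits list are vendor claims; to measure the difference on your own data, a rough wall-clock comparison is enough. A minimal sketch, assuming GNU `date` (for the `%N` nanoseconds format); `elapsed_ms` and `compare_du` are hypothetical helpers, and repeated runs matter because metadata caching can favor whichever command runs second.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the wall-clock time of a command in milliseconds.
# Requires GNU date for the %N (nanoseconds) format.
elapsed_ms() {
  local start end
  start=$(date +%s%N)
  "$@" >/dev/null 2>&1
  end=$(date +%s%N)
  echo $(( (end - start) / 1000000 ))
}

# Time du and pdu on the same directory. Run this several times:
# client and server metadata caches warmed by the first command
# can make the second one look faster than it is.
compare_du() {
  local dir="$1"
  echo "du -sh:  $(elapsed_ms du -sh "$dir") ms"
  if command -v pdu >/dev/null 2>&1; then
    echo "pdu -sh: $(elapsed_ms pdu -sh "$dir") ms"
  else
    echo "pdu -sh: not installed"
  fi
}
```

For example, `compare_du /scratch/user1` prints one timing line for each command.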
