If you are using Active Directory, you may only have the numeric UID of the owner to go on. To locate the home directory owned by that UID, use

cd /home

find . -maxdepth 1 -user 130456785323

lostuser

If you are writing a script that uses cat and you want a blank line after the cat output, do the following

cat /usr/local/lic_lmstat_log/abaqus_lmstat.log ; echo

.....

.....

Users of tfluid_int_ccmp: (Total of 128 licenses issued; Total of 0 licenses in use)

Users of tfluid_int_fluent: (Total of 128 licenses issued; Total of 0 licenses in use)

[user1@node1 ~]$
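
As a small self-contained sketch of the same idea, the snippet below writes a made-up log to a temporary file and prints it followed by one empty line. The helper name cat_with_gap and the file contents are assumptions for illustration only, not part of the original script.

```shell
#!/bin/sh
# Hypothetical helper: print a file, then one empty line.
cat_with_gap() {
    cat "$1"
    echo
}

# Demo file with illustrative content (not a real licence log).
demo_log=$(mktemp)
printf 'line one\nline two\n' > "$demo_log"

cat_with_gap "$demo_log"
echo "next output starts here"

rm -f "$demo_log"
```

The bare echo prints only a newline, which is what separates the file contents from whatever the script prints next.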

If you need to change the timestamp of everything in a directory recursively, you can combine find and touch. First change to the directory whose contents you want to update.

$ cd ToBeChangedDir

$ find * -exec touch -t 202403011000 {} \;

Finally, go up one level and change the timestamp of the top-level directory itself

$ cd ..

$ touch -t 202403011000 ToBeChangedDir

I had a casual read of the book “Bash Idioms” by Carl Albing. I scribbled down what struck me the most. There is so much more, so please read the book itself.

Lesson 1: “A && B” is true only if both A and B are true, so B is executed only when A succeeds.

Example 1: If the cd command succeeds, then execute the “rm -Rv *.tmp” command

cd tmp && rm -Rv *.tmp

Lesson 2: With “A || B”, if A is true, B is not executed, and vice versa.

Example 2: Change directory; if that fails, print a message saying the change of directory failed and exit

cd /tmp || { echo "cd to /tmp failed" ; exit ; }

Lesson 3: When do we use the [ ] versus [[ ]]?

I learned that the author advises Bash users to use [[ ]] wherever possible. The double bracket helps to avoid the confusing edge-case behaviours that a single bracket may exhibit. If, however, the main goal is portability to non-Bash platforms, the single bracket may be advisable.
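
One such edge case can be sketched as follows. This is my own minimal illustration, not an example from the book; the variable names are made up, and it needs Bash because [[ ]] is not available in plain POSIX sh.

```shell
#!/usr/bin/env bash
# Requires bash: [[ ]] is not part of plain POSIX sh.

name="two words"

# [[ ]] does not word-split $name, so the unquoted comparison works.
if [[ $name == "two words" ]]; then double_result="match"; else double_result="no match"; fi

# [ ] receives the unquoted $name as two separate arguments and reports
# an error (silenced here), so the test fails.
if [ $name = "two words" ] 2>/dev/null; then single_result="match"; else single_result="no match"; fi

# Quoting the variable makes the single-bracket form behave.
if [ "$name" = "two words" ]; then quoted_result="match"; else quoted_result="no match"; fi

echo "[[ ]] unquoted: $double_result"
echo "[ ] unquoted:   $single_result"
echo "[ ] quoted:     $quoted_result"
```

With a single bracket you have to remember to quote every variable; with a double bracket a forgotten quote on the left-hand side does less damage.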

You may use the command “telinit” to change the SysV system runlevel without the need to reboot. You will need root access to run the command

Use 1: Single User Mode

# telinit S

Use 2: To go to graphical.target

# telinit 5

Use 3: To go to multi-user.target

# telinit 3

Use 4: To reload the daemon configuration. This is equivalent to systemctl daemon-reload.

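# telinit q

To check the default target, you can use the command systemctl get-default. Note that this reports the target used at boot, not the one that is currently active.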

# systemctl get-default

graphical.target

Aliases are useful shorthand names that let you run a command without typing the whole thing.

Display all alias names

Typing alias on its own lists every alias that is currently defined

$ alias

alias cp='cp -i'

alias egrep='egrep --color=auto'

alias fgrep='fgrep --color=auto'

alias grep='grep --color=auto'

alias l.='ls -d .* --color=auto'

alias ll='ls -l --color=auto'

alias ls='ls --color=auto'

alias mv='mv -i'

alias rm='rm -i'

alias xzegrep='xzegrep --color=auto'

alias xzfgrep='xzfgrep --color=auto'

alias xzgrep='xzgrep --color=auto'

alias zegrep='zegrep --color=auto'

alias zfgrep='zfgrep --color=auto'

alias zgrep='zgrep --color=auto'

Useful aliases to consider

alias ls='ls --color=auto'

alias egrep='egrep --color=auto'

alias fgrep='fgrep --color=auto'

alias grep='grep --color=auto'

alias mv='mv -i'

alias rm='rm -i'

alias cp='cp -i'

Remove alias

Just use the command unalias

unalias ll

Point 1: View all the saved connections

# nmcli connection show

ens1f0 XXXX-XXXX-XXXX-XXXX-XXXX ethernet ens1f0

ens1f1 YYYY-YYYY-YYYY-YYYY-YYYY ethernet ens1f1

ens10f0 ZZZZ-ZZZZ-ZZZZ-ZZZZ-ZZZZ ethernet --

ens10f1 AAAA-AAAA-AAAA-AAAA-AAAA ethernet --

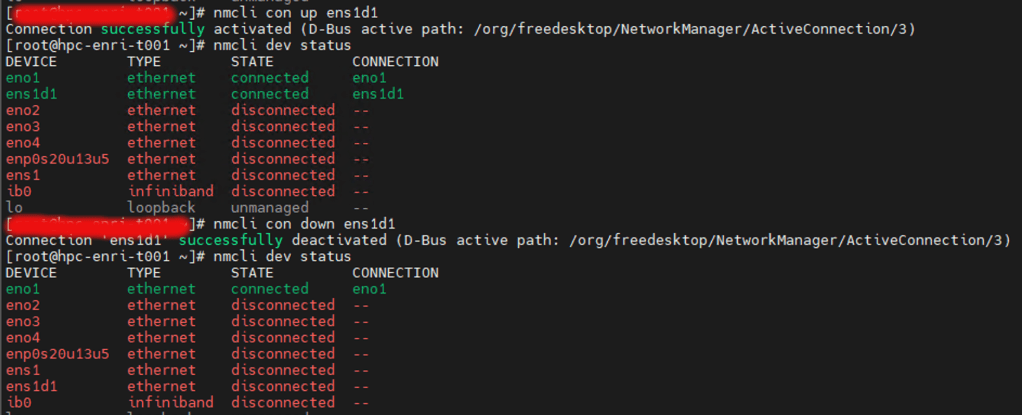

Point 2a: Stop Network

You can use the command “nmcli connection down name/uuid”. For example

# nmcli connection down XXXX-XXXX-XXXX-XXXX-XXXX

Connection 'eno0' successfully deactivated (D-Bus active path: /org/freedesktop/NetworkManager/ActiveConnection/3)

Point 2b: Start Network

You can use the command “nmcli connection up name/uuid”. For example

# nmcli connection up XXXX-XXXX-XXXX-XXXX-XXXX

Connection 'eno0' successfully activated (D-Bus active path: /org/freedesktop/NetworkManager/ActiveConnection/3)

Point 3: Device Connection

To check the Device status

# nmcli dev status

ens1f0 ethernet connected ens1f0

eno1f1 ethernet connected ens1f1

eno10f0 ethernet disconnected --

eno10f1 ethernet disconnected --
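
If you only care about the devices that are down, the status listing can be filtered with awk. The sketch below assumes the four-column layout shown above (device, type, state, connection). The helper name list_disconnected is made up for illustration, and the sample input is copied from the output above so that it runs without nmcli.

```shell
#!/bin/sh
# Hypothetical helper: read "nmcli dev status" style text on stdin and
# print the names of devices whose third column is "disconnected".
# Assumes the column order DEVICE TYPE STATE CONNECTION.
list_disconnected() {
    awk '$3 == "disconnected" { print $1 }'
}

# Sample copied from the output above. On a live system you would run:
#   nmcli dev status | list_disconnected
list_disconnected <<'EOF'
ens1f0 ethernet connected ens1f0
eno1f1 ethernet connected ens1f1
eno10f0 ethernet disconnected --
eno10f1 ethernet disconnected --
EOF
```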

Point 4: List all devices

# nmcli device show

GENERAL.DEVICE: ens1f0

GENERAL.TYPE: ethernet

GENERAL.HWADDR: XX:XX:XX:XX:XX:XX

GENERAL.MTU: 1500

GENERAL.STATE: 100 (connected)

GENERAL.CONNECTION: ens1f0

GENERAL.CON-PATH: /org/freedesktop/NetworkManager/ActiveConnection/2

WIRED-PROPERTIES.CARRIER: on

IP4.ADDRESS[1]: 192.168.0.1

IP4.GATEWAY: 192.168.0.254

IP4.ROUTE[1]: dst = 0.0.0.0/0, nh = 192.168.0.254, mt = 101

IP4.ROUTE[2]: dst = 198.168.0.0/19, nh = 0.0.0.0, mt = 101

IP6.ADDRESS[1]: xxxx::xxxx:xxxx:xxxx:xxxx/64

IP6.GATEWAY: --

IP6.ROUTE[1]: dst = fe80::/64, nh = ::, mt = 1024

GENERAL.DEVICE: eno1f1

GENERAL.TYPE: ethernet

GENERAL.HWADDR: 94:6D:AE:9B:76:1C

GENERAL.MTU: 1500

GENERAL.STATE: 100 (connected)

GENERAL.CONNECTION: eno1f1

GENERAL.CON-PATH: /org/freedesktop/NetworkManager/ActiveConnection/4

WIRED-PROPERTIES.CARRIER: on

IP4.ADDRESS[1]: 192.168.200.201/19

IP4.GATEWAY: --

IP4.ROUTE[1]: dst = 192.168.192.0/19, nh = 0.0.0.0, mt = 102

IP6.ADDRESS[1]: fe80::966d:aeff:fe9b:761c/64

IP6.GATEWAY: --

IP6.ROUTE[1]: dst = fe80::/64, nh = ::, mt = 1024

Point 5: Start and Stop Device

# nmcli con down ens1d1

# nmcli con up ens1d1
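
The two commands can be wrapped in a small function so that the connection only comes back up if the down step succeeded. This is a sketch under my own assumptions: the function name bounce_connection and the NMCLI override variable are hypothetical, and the override exists only so the commands can be previewed without touching the network.

```shell
#!/bin/sh
# Hypothetical helper: take a connection down, then bring it back up.
# Set NMCLI=echo to preview the arguments instead of running nmcli.
bounce_connection() {
    conn=$1
    ${NMCLI:-nmcli} con down "$conn" && ${NMCLI:-nmcli} con up "$conn"
}

# Dry run: prints the arguments that would be passed to nmcli.
NMCLI=echo bounce_connection ens1d1
```

Without the NMCLI override the function calls the real nmcli, which normally needs root access, as in the commands above.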


Basic Use of FIND

If you are looking for a file, one of the most common tools is find. Here is a recap.

| NO | FILE TYPE | DESCRIPTION |
|---|---|---|
| 1 | -type f | Limits search results to files only |
| 2 | -type d | Limits search results to directories only |
| 3 | -type l | Limits search results to symbolic links only |

For example, search case-insensitively for a file named “hello.mov”

$ find $HOME -type f -iname "Hello.mov"

Parameters

| NO | PARAMETERS | DESCRIPTION |
|---|---|---|
| 1 | -name | Perform a case-sensitive search for “files” |
| 2 | -iname | Perform a case-insensitive search for “files” |
| 3 | -size +n | Matches files of size larger than size n |
| 4 | -size -n | Matches files of size smaller than size n |
| 5 | -mtime n | Matches files or directories whose contents were last modified n*24 hours ago |
| 6 | -atime n | Matches files last accessed n*24 hours ago |

For example, search case-insensitively for all files with the extension .mov that were modified 2 days ago

$ find $HOME -type f -iname "*.mov" -mtime 2

Operators

| S/NO | OPERATOR | EXPLANATION |
|---|---|---|
| 1 | -and | Match only if the tests on both sides of the operator match |
| 2 | -or | Match if the test on either side of the operator matches |
| 3 | -not | Match if the test that follows the operator does not match |

For example, search for all files with Hello*, but exclude pdf and jpg

$ find \( -name "Hello*" -mtime 2 \) -and -not \( -iname "*.jpg" -or -iname "*.pdf" \)

When using ( ) to combine tests, remember to escape the brackets as \( and \). You will also need to leave a space on either side of each bracket.

To run a command on every file that is found, use -exec

find -type f -iname "*.mov" -exec chmod +x {} \;

The first part, find -type f -iname "*.mov", needs no further explanation. Executed commands must end with \; (a backslash and semi-colon) and may use {} (curly braces) as a placeholder for each file that the find command locates.
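
To try these options safely, the sketch below builds a throwaway directory with a few empty files and runs the same kinds of tests against it. All file and variable names are made up for illustration, and the -iname, -and, -or and -not options assume GNU or BSD find.

```shell
#!/bin/sh
# Throwaway directory tree; every file name here is illustrative.
demo_dir=$(mktemp -d)
mkdir "$demo_dir/clips"
touch "$demo_dir/Hello.mov" "$demo_dir/clips/HELLO.MOV" \
      "$demo_dir/Hello.pdf" "$demo_dir/notes.txt"

# -type f plus -iname: regular files only, matched case-insensitively.
mov_count=$(find "$demo_dir" -type f -iname "hello.mov" | wc -l | tr -d ' ')

# Grouped tests: names starting with Hello, but not .jpg or .pdf.
hello_count=$(find "$demo_dir" -type f \( -name "Hello*" \) \
    -and -not \( -iname "*.jpg" -or -iname "*.pdf" \) | wc -l | tr -d ' ')

# -exec runs chmod once per match; {} stands for the matched path.
find "$demo_dir" -type f -iname "*.mov" -exec chmod +x {} \;
if [ -x "$demo_dir/Hello.mov" ] && [ -x "$demo_dir/clips/HELLO.MOV" ]; then
    exec_ok=yes
else
    exec_ok=no
fi

echo "mov files: $mov_count, Hello* files kept: $hello_count, chmod applied: $exec_ok"
rm -rf "$demo_dir"
```

Working in a directory created by mktemp -d means a mistyped -exec cannot touch real files while you experiment.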


To check whether the root partition has run out of inodes, use the command below. If IUse% shows 100%, the filesystem most likely holds a very large number of small files. /tmp is a good place to start looking for them.

df -i

Filesystem Inodes IUsed IFree IUse% Mounted on

/dev/mapper/centos-root 9788840 320849 9467991 4% /

devtmpfs 70101496 560 70100936 1% /dev

tmpfs 70105725 8 70105717 1% /dev/shm

tmpfs 70105725 1581 70104144 1% /run

.....

.....

You may want to check which directories are using the most space with the command below

% du -hx -d 1 |sort -h

1.3M ./Espresso-BEEF

4.9M ./NB07

8.3M ./Gaussian2

31M ./Gaussian

65M ./MATLAB

478M ./Abaqus

647M ./pytorch-GAN

10G ./COMSOL

12G .

The -h argument produces human-readable sizes

-x keeps du on the current filesystem, so directories on other mounted filesystems are skipped

-d 1 limits the report to one directory level, giving a summary for each subdirectory

sort -h sorts by those human-readable sizes, so the directories with the largest usage appear at the bottom of the list.
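
The difference between sort -h and a plain numeric sort is easy to see on a handful of the sizes above. This is a small sketch; the variable names are made up, and sort -h assumes GNU coreutils.

```shell
#!/bin/sh
# A few sizes taken from the du output above, deliberately out of order.
sizes='10G
1.3M
478M
31M'

# sort -h (GNU coreutils) compares the K/M/G suffix as well as the number.
human_sorted=$(printf '%s\n' "$sizes" | LC_ALL=C sort -h)

# sort -n looks only at the leading number and ignores the suffix.
numeric_sorted=$(printf '%s\n' "$sizes" | LC_ALL=C sort -n)

echo "sort -h:"
echo "$human_sorted"
echo "sort -n:"
echo "$numeric_sorted"
```

This is why du -h should be paired with sort -h: a numeric sort would place 10G between 1.3M and 31M.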

Sometimes, as a non-root user, you try to change your shell and get an error

$ chsh -s /bin/tcsh

chsh you (user xxxxxxxxx) don't exist

This error occurs when the user ID and password come from LDAP or Active Directory, so there is no local account in /etc/passwd, which is where chsh looks first. I use Centrify, where the default shell environment can be configured on AD. But there is a simple workaround if you do not want to bother your system administrator

Step 1: Check that tcsh is installed. I have it!

$ chsh -l

/bin/bash

/bin/cdax/bash

/bin/cdax/csh

/bin/cdax/ksh

/bin/cdax/rksh

/bin/cdax/sh

/bin/cdax/tcsh

/bin/csh

/bin/ksh

/bin/rksh

/bin/sh

/bin/tcsh

/sbin/nologin

/usr/bin/bash

/usr/bin/cdax/bash

/usr/bin/cdax/dzsh

/usr/bin/cdax/sh

/usr/bin/dzsh

/usr/bin/sh

/usr/sbin/nologin

/usr/bin/tmux

Step 2: Check your current shell

$ echo "$SHELL"

/bin/bash

Step 3: Write a simple .profile file

$ vim ~/.profile

if [ "$SHELL" != "/bin/tcsh" ]

then

export SHELL="/bin/tcsh"

exec /bin/tcsh -l # -l: login shell again

fi

Step 4: In your .bashrc, add the line “source ~/.profile”

# .bashrc

# Source global definitions

if [ -f /etc/bashrc ]; then

. /etc/bashrc

fi

source ~/.profile

Source the .bashrc again

$ source ~/.bashrc
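
Because .bashrc can also be read by sessions that are not interactive, you may prefer to guard the exec so that it only fires in an interactive shell and only when the target shell is actually installed. The sketch below is one possible guard, not part of the original workaround; the function name should_switch_shell is hypothetical, and the real exec is left commented out so the snippet has no side effects.

```shell
#!/bin/sh
# Hypothetical guard: succeed only when it looks safe to exec the target shell.
#   $1 = current shell path, $2 = target shell path, $3 = shell flags ("$-")
should_switch_shell() {
    [ "$1" != "$2" ] || return 1      # already running the target shell
    [ -x "$2" ] || return 1           # target shell is not installed
    case $3 in
        *i*) return 0 ;;              # interactive session
        *)   return 1 ;;              # non-interactive: leave it alone
    esac
}

# Intended use inside ~/.profile (commented out on purpose):
# if should_switch_shell "$SHELL" /bin/tcsh "$-"; then
#     export SHELL=/bin/tcsh
#     exec /bin/tcsh -l
# fi

# Harmless demonstration: current and target are the same, so nothing happens.
if should_switch_shell /bin/sh /bin/sh "i"; then echo "switch"; else echo "stay"; fi
```

The flags in "$-" contain the letter i only for interactive shells, which is what the case statement checks.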