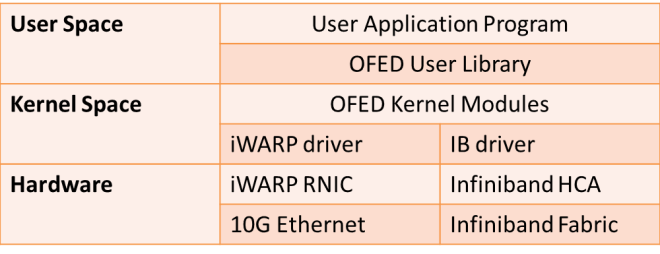

Remote Direct Memory Access (RDMA) allows data to be transferred over a network from the memory of one computer to the memory of another without CPU intervention. There are two types of RDMA hardware: InfiniBand and RDMA over IP (iWARP). The OpenFabrics Enterprise Distribution (OFED) stack provides a common interface to both types of RDMA hardware.

High-bandwidth switches such as 10G Ethernet allow high transfer rates, but TCP/IP alone cannot make use of the entire 10G bandwidth because of the data copying, packet processing and interrupt handling required on the CPUs at each end of the TCP/IP connection. In a traditional TCP/IP network stack, an interrupt occurs for every packet sent or received, and data is copied at least once in each host computer’s memory (between user space and the kernel’s TCP/IP buffers). The CPU is also responsible for processing the multiple nested packet headers of every protocol level in all incoming and outgoing packets.
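To get a feel for the load this places on the CPU, here is a rough back-of-envelope sketch. It assumes a standard 1500-byte Ethernet MTU, ignores framing overhead, and takes the one-interrupt-per-packet and one-copy-per-host figures from the paragraph above at face value; the function names are made up for illustration.

```python
# Rough estimate of per-host work at 10G line rate.
# Assumptions (not from the original text): 1500-byte packets, no framing overhead.

LINK_BITS_PER_SECOND = 10_000_000_000  # 10 Gigabit Ethernet
MTU_BYTES = 1500                       # assumed standard Ethernet MTU


def packets_per_second(link_bps, packet_bytes):
    """Packets needed per second to fill the link with packets of this size."""
    return link_bps / (packet_bytes * 8)


def copy_bytes_per_second(link_bps, copies_per_host=1):
    """Bytes the CPU must copy per second if every byte is copied copies_per_host times."""
    return link_bps / 8 * copies_per_host


pps = packets_per_second(LINK_BITS_PER_SECOND, MTU_BYTES)
copied = copy_bytes_per_second(LINK_BITS_PER_SECOND)

print(f"{pps:,.0f} packets/s (and interrupts/s, at one interrupt per packet)")
print(f"{copied / 1e9:.2f} GB/s copied between user space and kernel buffers")
```

Under these assumptions a saturated link means roughly 833,000 packets per second and 1.25 GB/s of memory copying on each host, before any header processing is counted.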

Cards with iWARP and TCP Offload Engine (TOE) capabilities, such as those from Chelsio, allow the entire iWARP, TCP and IP protocol processing to be offloaded from the main CPU onto the iWARP/TOE card, achieving throughput close to the full capacity of 10G Ethernet.

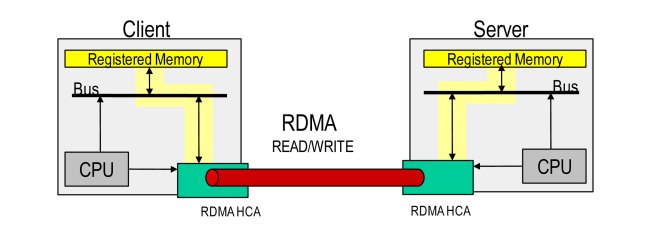

RDMA-based communication

(Taken from TCP Bypass Overview by Informix Solution (June 2011) Pg 11)

- Removes the CPU as a bottleneck by using a user-space-to-user-space remote copy, after memory registration

- The HCA (Host Channel Adapter) is responsible for the virtual-physical -> physical-virtual address mapping

- Shared keys are exchanged for access rights and current ownership

- Memory has to be registered to lock it into RAM and initialise the HCA TLB

- An RDMA read uses no CPU cycles on the donor side after registration.
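The registration and key-exchange steps above can be sketched as a toy model. This is only a simulation in plain Python, not the real verbs API (in libibverbs, registration is done with `ibv_reg_mr`); `ToyHCA`, `MemoryRegion`, `register` and `rdma_read` are hypothetical names invented for this illustration, and the lookup table merely stands in for the HCA's translation table.

```python
import secrets
from dataclasses import dataclass


@dataclass
class MemoryRegion:
    """A registered buffer plus the key a remote peer must present to access it."""
    buffer: bytearray
    rkey: int
    remote_read: bool


class ToyHCA:
    """Hypothetical stand-in for an HCA; it only models the bookkeeping."""

    def __init__(self):
        # rkey -> region; plays the role of the HCA's translation table.
        self._regions = {}

    def register(self, buffer, remote_read=False):
        """Register a buffer and return its region, including a fresh rkey."""
        rkey = secrets.randbits(32)
        while rkey in self._regions:  # avoid the (unlikely) key collision
            rkey = secrets.randbits(32)
        region = MemoryRegion(buffer, rkey, remote_read)
        self._regions[rkey] = region
        return region

    def rdma_read(self, rkey, offset, length):
        """Serve a remote read if the key is known and read access was granted."""
        region = self._regions.get(rkey)
        if region is None:
            raise PermissionError("unknown rkey: memory was never registered")
        if not region.remote_read:
            raise PermissionError("region was not registered for remote read")
        if offset < 0 or length < 0 or offset + length > len(region.buffer):
            raise ValueError("read falls outside the registered region")
        return bytes(region.buffer[offset:offset + length])


# Donor side: register a buffer once and hand the rkey to the peer.
donor_hca = ToyHCA()
region = donor_hca.register(bytearray(b"hello rdma"), remote_read=True)

# Requester side: present the exchanged rkey to read the donor's memory.
data = donor_hca.rdma_read(region.rkey, 0, 5)
print(data)  # b'hello'
```

In this model the donor application does nothing after `register`; every later read is served by the `ToyHCA` object alone, which mirrors the point that the donor's CPU is not involved once memory is registered. On real hardware that work is done by the adapter, whereas here it is of course still ordinary Python running on the CPU.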