If you encounter errors like “error registering openib memory”, similar to the warning below, you may want to read the Open MPI FAQ item titled “I’m getting errors about ‘error registering openib memory’; what do I do?”

```
WARNING: It appears that your OpenFabrics subsystem is configured to only
allow registering part of your physical memory. This can cause MPI jobs to
run with erratic performance, hang, and/or crash.

This may be caused by your OpenFabrics vendor limiting the amount of
physical memory that can be registered. You should investigate the
relevant Linux kernel module parameters that control how much physical
memory can be registered, and increase them to allow registering all
physical memory on your machine.

See this Open MPI FAQ item for more information on these Linux kernel module
parameters:

http://www.open-mpi.org/faq/?category=openfabrics#ib-locked-pages

Local host: node02
Registerable memory: 32768 MiB
Total memory: 65476 MiB

Your MPI job will continue, but may be behave poorly and/or hang.
```

The explanation and solution can be found in How to increase MTT Size in Mellanox HCA.

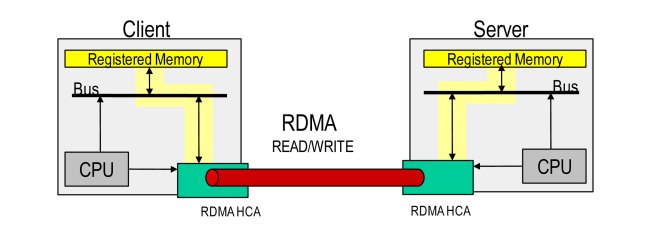

In summary, the error occurs when an application consumes a large amount of memory: it may fail when not enough memory can be registered for RDMA. The fix is to increase the MTT (memory translation table) size. However, a larger MTT has the downside of increasing the number of “cache misses”, which increases latency.

1. To check your value of log_num_mtt:

   ```
   # cat /sys/module/mlx4_core/parameters/log_num_mtt
   ```

2. To check your value of log_mtts_per_seg:

   ```
   # cat /sys/module/mlx4_core/parameters/log_mtts_per_seg
   ```
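The two checks above can also be scripted. The following is a minimal Python sketch, assuming the parameters are exposed under /sys/module/mlx4_core/parameters exactly as in the commands above; the function name read_mlx4_param is hypothetical. It returns None when a parameter file is missing or unreadable, for example when mlx4_core is not loaded or when older hardware exposes num_mtt instead of log_num_mtt.

```python
from pathlib import Path
from typing import Optional

# Directory where the mlx4_core kernel module exposes its parameters
# (the same files read with `cat` in the steps above).
MLX4_PARAMS = Path("/sys/module/mlx4_core/parameters")


def read_mlx4_param(name: str, root: Path = MLX4_PARAMS) -> Optional[int]:
    """Return the integer value of an mlx4_core parameter, or None if unavailable."""
    path = root / name
    try:
        return int(path.read_text().strip())
    except (OSError, ValueError):
        # File missing or unreadable (module not loaded, parameter not
        # exposed), or the content is not an integer.
        return None


if __name__ == "__main__":
    for param in ("log_num_mtt", "log_mtts_per_seg"):
        print(param, "=", read_mlx4_param(param))
```

Passing a different root directory makes the function easy to try against a copy of the parameter files.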

Two parameters affect how much memory can be registered. The following is taken from http://www.open-mpi.org/faq/?category=openfabrics#ib-low-reg-mem:

With Mellanox hardware, two parameters are provided to control the size of this table:

- log_num_mtt (on some older Mellanox hardware, the parameter may be num_mtt, not log_num_mtt): number of memory translation tables
- log_mtts_per_seg: log2 of the number of MTT entries per segment

The amount of memory that can be registered is calculated using this formula:

In newer hardware:

max_reg_mem = (2^log_num_mtt) * (2^log_mtts_per_seg) * PAGE_SIZE

In older hardware:

max_reg_mem = num_mtt * (2^log_mtts_per_seg) * PAGE_SIZE
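The two formulas translate directly into code. This is a small Python sketch (the function names are hypothetical) that returns max_reg_mem in bytes; it uses `1 << n` for 2^n so the result stays an exact integer.

```python
def max_reg_mem_new(log_num_mtt: int, log_mtts_per_seg: int, page_size: int) -> int:
    """Newer hardware: (2^log_num_mtt) * (2^log_mtts_per_seg) * PAGE_SIZE, in bytes."""
    return (1 << log_num_mtt) * (1 << log_mtts_per_seg) * page_size


def max_reg_mem_old(num_mtt: int, log_mtts_per_seg: int, page_size: int) -> int:
    """Older hardware: num_mtt * (2^log_mtts_per_seg) * PAGE_SIZE, in bytes."""
    return num_mtt * (1 << log_mtts_per_seg) * page_size


GiB = 1 << 30
print(max_reg_mem_new(22, 3, 4096) // GiB)  # 128
```

The only difference between the two is whether the first factor is given as an exponent (log_num_mtt) or as a plain count (num_mtt).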

For example, if your server has 64 GB of physical memory, you will want max_reg_mem to be twice the physical memory (2 x 64 GB = 128 GB). You will also need to know the PAGE_SIZE (see Virtual Memory PAGESIZE on CentOS).
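Both inputs for the example can be read on the node itself. A minimal Python sketch, assuming Linux, where `os.sysconf` provides SC_PAGE_SIZE (the same value `getconf PAGESIZE` reports) and SC_PHYS_PAGES; the helper names are hypothetical, and the default factor of 2 is taken from the example above.

```python
import os


def page_size_bytes() -> int:
    """Virtual-memory page size in bytes: the PAGE_SIZE term of max_reg_mem."""
    return os.sysconf("SC_PAGE_SIZE")


def physical_memory_bytes() -> int:
    """Physical memory visible to the kernel, in bytes (Linux sysconf values)."""
    return os.sysconf("SC_PAGE_SIZE") * os.sysconf("SC_PHYS_PAGES")


def target_reg_mem(phys_mem_bytes: int, factor: int = 2) -> int:
    """Registerable memory to aim for: `factor` times physical memory."""
    return factor * phys_mem_bytes


if __name__ == "__main__":
    print("PAGE_SIZE:", page_size_bytes())
    print("target max_reg_mem (bytes):", target_reg_mem(physical_memory_bytes()))
```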

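Assuming log_mtts_per_seg = 3 and a 4 kB (2^12-byte) page size, and taking 128 GB as 2^37 bytes:

$$
\begin{aligned}
\text{max\_reg\_mem} &= 2^{\text{log\_num\_mtt}} \times 2^{3} \times 4\,\text{kB} \\
128\,\text{GB} &= 2^{\text{log\_num\_mtt}} \times 2^{3} \times 4\,\text{kB} \\
2^{37} &= 2^{\text{log\_num\_mtt}} \times 2^{3} \times 2^{12} \\
2^{22} &= 2^{\text{log\_num\_mtt}} \\
\text{log\_num\_mtt} &= 22
\end{aligned}
$$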
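The same derivation can be run in reverse for any memory size: find the smallest log_num_mtt whose max_reg_mem covers the target. This Python sketch (hypothetical function name) uses the newer-hardware formula and rounds up, so a target that is not a power of two, such as twice the 65476 MiB total reported in the warning, still yields a large-enough value.

```python
def required_log_num_mtt(target_bytes: int, log_mtts_per_seg: int, page_size: int) -> int:
    """Smallest log_num_mtt with 2^log_num_mtt * 2^log_mtts_per_seg * page_size >= target."""
    if target_bytes <= 0:
        raise ValueError("target_bytes must be positive")
    # Bytes covered by one MTT entry: 2^log_mtts_per_seg pages.
    bytes_per_mtt = (1 << log_mtts_per_seg) * page_size
    # Number of MTT entries needed, rounded up (ceiling division).
    entries = -(-target_bytes // bytes_per_mtt)
    # ceil(log2(entries)) for a positive integer.
    return (entries - 1).bit_length()


print(required_log_num_mtt(128 * 2**30, 3, 4096))      # 22
print(required_log_num_mtt(2 * 65476 * 2**20, 3, 4096))  # 22
```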

The setting is in /etc/modprobe.d/mlx4_mtt.conf on every node.
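The contents of that file are not shown here. As an assumption, it would use the standard modprobe.d syntax, `options <module> <parameter>=<value>`, which the hypothetical helper below renders for the values from the example. Module parameters are read when mlx4_core is loaded, so new values apply only after the module is reloaded or the node is rebooted.

```python
def mlx4_modprobe_options(log_num_mtt: int, log_mtts_per_seg: int) -> str:
    """Render an `options` line for mlx4_core in modprobe.d syntax (assumed file content)."""
    return (
        f"options mlx4_core log_num_mtt={log_num_mtt} "
        f"log_mtts_per_seg={log_mtts_per_seg}\n"
    )


if __name__ == "__main__":
    # Values from the 64 GB example above.
    print(mlx4_modprobe_options(22, 3), end="")
    # prints: options mlx4_core log_num_mtt=22 log_mtts_per_seg=3
```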

References: