What is SLAW?

SLAW is a scalable, containerized workflow for untargeted LC-MS processing. It was developed by Alexis Delabriere in the Zamboni Lab at ETH Zurich. An explanation of the advantages of SLAW and the motivation behind its development can be found in this blog post. In brief, the core advantages of SLAW are:

Getting the Test Data from the Source Code

You may want to download the SLAW source code, which contains test data you can use to test your SLAW container.

% git clone https://github.com/zamboni-lab/SLAW.git

Let's make a new directory, my_SLAW, and copy test_data into it. I’m assuming you have Singularity installed; if not, see Compiling Singularity-CE-3.9.2 on CentOS-7.

Create the output folder and unzip mzML.zip:

% mkdir ~/my_SLAW

% cp -r SLAW/test_data ~/my_SLAW

% cd ~/my_SLAW/test_data

% mkdir output

% unzip mzML.zip

% singularity pull slaw.sif docker://zambonilab/slaw:latest

Test Running

Just a few things to note: try to use absolute paths for PATH_OUTPUT and MZML_FOLDER.

% singularity run -C -W . -B PATH_OUTPUT:/output -B MZML_FOLDER:/input slaw.sif

For example,

% singularity run -C -W . -B /home/user1/my_SLAW/output:/output -B /home/user1/my_SLAW/mzML:/input slaw.sif
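Since the bind paths work best as absolute paths, one way to avoid typing them by hand is to resolve them first. The following is a minimal sketch, not part of SLAW: build_slaw_cmd is a hypothetical helper name, it assumes the realpath utility is available, and it assumes the folder names contain no spaces. It only prints the command so you can review it before running it.

```shell
# Hypothetical helper (not part of SLAW): print the singularity command
# with both bind folders resolved to absolute paths.
# Arguments: output folder, mzML folder, container image.
build_slaw_cmd() (
    # realpath turns a relative path into an absolute one
    out_dir=$(realpath "$1") || exit 1
    in_dir=$(realpath "$2") || exit 1
    # Same flags as the command above; only the paths are substituted
    printf 'singularity run -C -W . -B %s:/output -B %s:/input %s\n' \
        "$out_dir" "$in_dir" "$3"
)

# Example call, assuming folders named output and mzML in the current directory:
# build_slaw_cmd output mzML slaw.sif
```

Printing the command rather than executing it keeps the sketch safe to try; once the paths look right, copy the printed line to your prompt.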

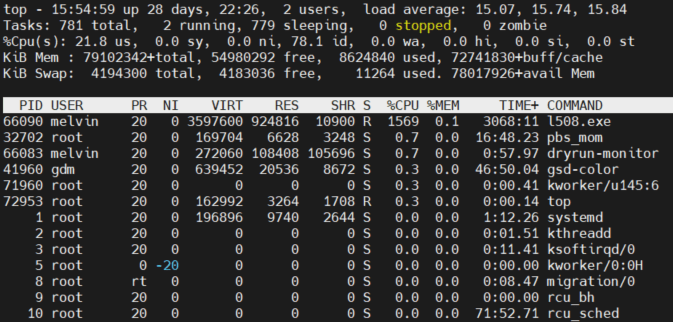

2022-02-25|00:46:52|INFO: Total memory available: 53026 and 32 cores. The workfl

2022-02-25|00:46:52|INFO: Guessing polarity from file:DDA1.mzML

2022-02-25|00:46:53|INFO: Polarity detected: positive

2022-02-25|00:46:54|INFO: STEP: initialisation TOTAL_TIME:2.41s LAST_STEP:2.41s

2022-02-25|00:46:55|INFO: 0 peakpicking added

2022-02-25|00:46:59|INFO: MS2 extraction finished

2022-02-25|00:46:59|INFO: Starting peaktable filtration

2022-02-25|00:46:59|INFO: Done peaktables filtration

2022-02-25|00:46:59|INFO: STEP: peakpicking TOTAL_TIME:7.57s LAST_STEP:5.16s

2022-02-25|00:46:59|INFO: Alignment finished

2022-02-25|00:46:59|INFO: STEP: alignment TOTAL_TIME:7.60s LAST_STEP:0.03s

2022-02-25|00:47:10|INFO: Gap filling and isotopic pattern extraction finished.

2022-02-25|00:47:10|INFO: STEP: gap-filling TOTAL_TIME:18.01s LAST_STEP:10.41s

2022-02-25|00:47:10|INFO: Annotation finished

2022-02-25|00:47:10|INFO: STEP: annotation TOTAL_TIME:18.04s LAST_STEP:0.03s

2022-02-25|00:47:10|INFO: Processing finished.
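The STEP lines in the log above report how long each stage took. If you have saved the log to a file, a short awk filter can pull those timings out. This is only a sketch: step_times is a hypothetical helper name, the log file name is an assumption, and the field positions are taken from the log lines shown above.

```shell
# Hypothetical helper: list each step and the time it took (LAST_STEP)
# from a saved SLAW log file given as the first argument.
step_times() {
    # Fields are split on spaces: $2 is "STEP:", $3 is the step name,
    # $5 is "LAST_STEP:<seconds>s"; strip the label and print name + time.
    awk '$2 == "STEP:" { sub(/^LAST_STEP:/, "", $5); print $3, $5 }' "$1"
}

# Example call, assuming the log was saved as slaw.log:
# step_times slaw.log
```

In the run above, gap-filling (10.41s) and peakpicking (5.16s) account for most of the 18.04s total.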