This article is excerpted from Intel's “Efficient Heterogeneous Parallel Programming Using OpenMP”.

In some cases, offloading computations to an accelerator such as a GPU leaves the host CPU idle until the offloaded computations finish. However, using the CPU and GPU simultaneously can improve application performance. In OpenMP® programs that take advantage of heterogeneous parallelism, the master construct can be used to exploit simultaneous CPU and GPU execution. In this article, we will show you how to do CPU+GPU asynchronous calculation using OpenMP.
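To make the idea concrete, here is a minimal sketch of the pattern, not the code from the Intel article. The excerpt names only the master construct; pairing it with `nowait` on the target region is an assumption about how the launching thread stays free for host work. The function name `axpy_hetero`, the `gpu_share` split fraction, and the `schedule(dynamic, 1024)` chunk size are all hypothetical choices made for illustration.

```c
#include <assert.h>

/* y = a*x + y over n elements, split between the device and the host.
 * gpu_share (hypothetical tuning knob) is the fraction of elements,
 * between 0 and 1, that is offloaded. */
void axpy_hetero(float a, const float *x, float *y, int n, double gpu_share)
{
    assert(gpu_share >= 0.0 && gpu_share <= 1.0);
    int n_gpu = (int)(n * gpu_share);   /* elements [0, n_gpu) go to the device */

    #pragma omp parallel
    {
        /* Only the master thread launches the offload. With nowait the
         * target region becomes a deferred task, so this thread does not
         * block here and can join the host loop below. */
        #pragma omp master
        {
            #pragma omp target teams distribute parallel for nowait \
                map(to: x[0:n_gpu]) map(tofrom: y[0:n_gpu])
            for (int i = 0; i < n_gpu; ++i)
                y[i] = a * x[i] + y[i];
        }

        /* All host threads share the remaining elements [n_gpu, n). */
        #pragma omp for schedule(dynamic, 1024)
        for (int i = n_gpu; i < n; ++i)
            y[i] = a * x[i] + y[i];
    }
    /* Past the end of the parallel region, both the offloaded part and
     * the host part have completed. */
}
```

Calling `axpy_hetero(3.0f, x, y, n, 0.5)` would send half of the elements to the device and leave the other half to the host threads. The right split depends on the relative speed of the two and has to be measured. If the code is built without OpenMP support, the pragmas are ignored and both loops simply run serially on the host, which is handy for checking correctness.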

…..

…..

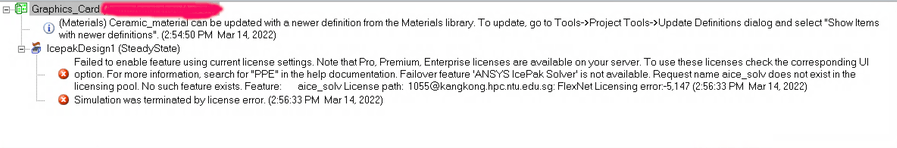

…..The Intel® oneAPI DPC++/C++ Compiler was used with the following command-line options:

-O3 -Ofast -xCORE-AVX512 -mprefer-vector-width=512 -ffast-math -qopt-multiple-gather-scatter-by-shuffles -fimf-precision=low

-fiopenmp -fopenmp-targets=spir64="-fp-model=precise"…..

Intel: Efficient Heterogeneous Parallel Programming Using OpenMP (Best Practices to Keep the CPU and GPU Working at the Same Time)

…..

…..

OpenMP provides true asynchronous, heterogeneous execution on CPU+GPU systems. It’s clear from our timing results and VTune profiles that keeping the CPU and GPU busy in the OpenMP parallel region gives the best performance. We encourage you to try this approach.