To test whether GROMACS has been compiled correctly with the CUDA driver and runtime, use the command

% gmx_mpi --version

You should see

GPU support: CUDA
.....
.....
CUDA driver: 10.10
CUDA runtime: 10.10
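The same check can be scripted. The following is a minimal sketch, assuming the version output contains a line starting with "GPU support:" as shown above; the helper name `has_cuda_support` is made up for illustration and is not part of GROMACS.

```shell
# Hypothetical helper: reads `gmx_mpi --version` output on stdin and
# succeeds only if the "GPU support" line reports CUDA.
has_cuda_support() {
    grep -Eq '^[[:space:]]*GPU support:[[:space:]]*CUDA'
}

# Run the real check only when gmx_mpi is on PATH.
if command -v gmx_mpi >/dev/null 2>&1; then
    if gmx_mpi --version 2>/dev/null | has_cuda_support; then
        echo "CUDA support detected"
    else
        echo "no CUDA support reported"
    fi
fi
```

Reading from stdin keeps the helper usable on saved output as well, for example `has_cuda_support < version.txt`.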

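If you are compiling GROMACS as described in “Compiling Gromacs-2019.3 with Intel MKL and CUDA” and CMake fails to download the regression tests with errors similar to the one below, follow the steps after the log to use a locally downloaded copy instead: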

Downloading: http://gerrit.gromacs.org/download/regressiontests-2020.3.tar.gz
-- [download 100% complete]
CMake Error at tests/CMakeLists.txt:57 (message):
error: downloading 'http://gerrit.gromacs.org/download/regressiontests-2020.3.tar.gz' failed
status_code: 7
status_string: "Couldn't connect to server"
log: Trying 130.237.25.133...
TCP_NODELAY set
Connected to gerrit.gromacs.org (130.237.25.133) port 80 (#0)
GET /download/regressiontests-2020.3.tar.gz HTTP/1.1
Host: gerrit.gromacs.org
User-Agent: curl/7.54.1
Accept: */*
HTTP/1.1 302 Found
Date: Mon, 28 Dec 2020 02:26:13 GMT
Server: Apache/2.4.18 (Ubuntu)
Location: ftp://ftp.gromacs.org/regressiontests/regressiontests-2020.3.tar.gz
Content-Length: 335
Content-Type: text/html; charset=iso-8859-1
Ignoring the response-body
[335 bytes data]
Connection #0 to host gerrit.gromacs.org left intact
Issue another request to this URL: 'ftp://ftp.gromacs.org/regressiontests/regressiontests-2020.3.tar.gz'
Trying 130.237.11.165...
TCP_NODELAY set
Connected to ftp.gromacs.org (130.237.11.165) port 21 (#1)
220 Welcome to the GROMACS FTP service.
USER anonymous
331 Please specify the password.
PASS ftp@example.com
230 Login successful.
PWD
257 "/" is the current directory
Entry path is '/'
CWD regressiontests
ftp_perform ends with SECONDARY: 0
250 Directory successfully changed.
.....
.....
Connecting to 130.237.11.165 (130.237.11.165) port 10087
connect to 130.237.11.165 port 21 failed: Connection timed out
Failed to connect to ftp.gromacs.org port 21: Connection timed out
Closing connection 1

Step 1: Download the relevant regression test tarball from https://ftp.gromacs.org/regressiontests/

Step 2: Untar the regression tests in the GROMACS source directory

% tar -zxvf regressiontests-2019.6.tar.gz
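Steps 1 and 2 can be combined in a small script. This is a sketch only: it assumes the tarball is named `regressiontests-<version>.tar.gz` under the URL from Step 1, and the function name `fetch_regressiontests` is made up for illustration.

```shell
# Assumed values: the version and base URL follow the steps above.
version="2019.6"
base_url="https://ftp.gromacs.org/regressiontests"

tarball="regressiontests-${version}.tar.gz"
url="${base_url}/${tarball}"

# Hypothetical helper: download the tarball if it is not already
# present in the current directory, then unpack it.
fetch_regressiontests() {
    [ -f "$tarball" ] || curl -fLO "$url" || return 1
    tar -zxf "$tarball"
}

echo "Tarball: $tarball"
echo "URL:     $url"
# Call fetch_regressiontests from the GROMACS source directory when ready.
```

The download is wrapped in a function so that nothing touches the network until you call it explicitly.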

Step 3: Create a script file

% touch gromacs_gpgpu.sh

In the script, add the following options to the cmake command: -DREGRESSIONTEST_DOWNLOAD=OFF -DREGRESSIONTEST_PATH=../regressiontests-2019.6

The full cmake command is:

CC=mpicc CXX=mpicxx cmake .. -DCMAKE_C_COMPILER=mpicc -DCMAKE_CXX_COMPILER=mpicxx -DGMX_MPI=on -DGMX_FFT_LIBRARY=mkl \
-DCMAKE_INSTALL_PREFIX=/usr/local/gromacs-2019.3_intel18_mkl_cuda11.1 -DREGRESSIONTEST_DOWNLOAD=OFF -DREGRESSIONTEST_PATH=../regressiontests-2019.6 \
-DCMAKE_C_FLAGS:STRING="-cc=icc -O3 -xHost -ip" \
-DCMAKE_CXX_FLAGS:STRING="-cxx=icpc -O3 -xHost -ip -I/usr/local/intel/2018u3/compilers_and_libraries_2018.3.222/linux/mpi/intel64/include/" \
-DGMX_GPU=on \
-DCUDA_TOOLKIT_ROOT_DIR=/usr/local/cuda-10.1 \
-DCMAKE_BUILD_TYPE=Release \
-DCUDA_HOST_COMPILER:FILEPATH=/usr/local/intel/2018u3/compilers_and_libraries_2018.3.222/linux/bin/intel64/icpc
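Because the regression test path is relative, it is easy to point cmake at a directory that does not exist. The following is a minimal pre-flight sketch that could go at the top of the script; the function name `regressiontest_dir_ok` is an assumption for illustration, and the path is the one used above.

```shell
# Hypothetical pre-flight check: confirm that the directory passed to
# -DREGRESSIONTEST_PATH exists before invoking cmake.
regressiontest_dir_ok() {
    # $1: path to the unpacked regression tests
    [ -d "$1" ]
}

reg_path="../regressiontests-2019.6"
if regressiontest_dir_ok "$reg_path"; then
    echo "Found $reg_path; pass -DREGRESSIONTEST_PATH=$reg_path to cmake"
else
    echo "Missing $reg_path; untar the regression tests first" >&2
fi
```

Run it from the build directory, since that is where the relative path is resolved.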

If the font size is too small in a graphical session on a remote server, you can change it. First, log in with X11 forwarding enabled:

% ssh -X userid@remoteserver

If you are using xfce4, you can use xfce4-settings-manager to change the font size settings. On the command line, type

% xfce4-settings-manager

In the settings manager window, click “Settings Editor” under the Other category.

Select the xsettings channel, look for the font entry with the value “Sans 12”, and edit it to change the font size.
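The same setting can also be read from the command line with xfconf-query. This is a sketch under assumptions: it assumes the font is stored in the `/Gtk/FontName` property of the xsettings channel, and the helper `font_value` and the size 14 are made up for illustration.

```shell
# Hypothetical helper: build a font value such as "Sans 14".
font_value() {
    printf '%s %s' "$1" "$2"
}

new_font="$(font_value Sans 14)"
echo "Would set font to: $new_font"

# Query the current value only when xfconf-query and a display are available.
if command -v xfconf-query >/dev/null 2>&1 && [ -n "${DISPLAY:-}" ]; then
    xfconf-query -c xsettings -p /Gtk/FontName || true
    # To apply the new value, uncomment the next line:
    # xfconf-query -c xsettings -p /Gtk/FontName -s "$new_font"
fi
```

The write is left commented out so that running the sketch does not change your desktop settings by accident.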

An interesting solution from Panduit for managing cables neatly.

The CentOS project recently announced a shift in strategy for CentOS.

Where do we go from here? We can look at Rocky Linux. Rocky Linux aims to function as a downstream build as CentOS had done previously, building releases after they have been added by the upstream vendor, not before. Rocky Linux is led by Gregory Kurtzer, founder of the CentOS project.

Step 1: Log in to your RMC

Step 2: Check the state of the system. To view the partition configuration, enter show npar.

RMC cli> show npar pnum=0
RMC cli> show complex

If no errors are indicated, then proceed to power up the system.

If there are errors, run

RMC cli> show logs error

and resolve the errors before powering up the system.

Step 3: Power up the system. Assuming it is configured with all chassis in one large nPartition numbered 0, enter

RMC cli> power on npar pnum=0


Step 1: Power down the Superdome Flex server from the OS

% shutdown -h now

Step 2: Log into the RMC as the administrator user, and enter the following command to power OFF the system:

RMC cli> power off npar pnum=0

To power off a single partition from the RMC, enter the following command:

RMC cli> power off npar pnum=x

Where x=nPar number.

If the above command does not work, you may have to force the power-off:

RMC cli> power off npar pnum=0 force

Step 3: Enter the following command, and verify that the Run State is OFF:

RMC cli> show npar

Partitions: 1

Par Run Status Chassis HT RAS CPUs Memory (GB) IO Cards Boot Boot Boot
Num State OK/In OK/In In/OK OK/In Chassis Slots
===== ========== ========== ======= === === ========= ============== ========= ======== ============
p0 Off OK 1/1 off on 4/4 256/256 0/0 r001i01b 3,5

* OK/In = OK/Installed
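If you save the show npar output to a file, the Run State can be checked with a short script instead of by eye. This is a minimal sketch, assuming each partition appears on its own line with the partition name in the first column and the Run State in the second, and that the state is a single word; the helper name `npar_run_state` is made up for illustration.

```shell
# Hypothetical helper: print the Run State column for one partition.
# Reads saved `show npar` output on stdin; $1 is the partition name, e.g. p0.
npar_run_state() {
    awk -v par="$1" '$1 == par { print $2 }'
}

# Sample data row copied from the output above.
sample='p0 Off OK 1/1 off on 4/4 256/256 0/0 r001i01b 3,5'

state="$(printf '%s\n' "$sample" | npar_run_state p0)"
if [ "$state" = "Off" ]; then
    echo "p0 is powered off"
else
    echo "p0 run state: ${state:-unknown}"
fi
```

With real output you would replace the sample with something like `npar_run_state p0 < show_npar.txt`, where the file name is your own choice.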


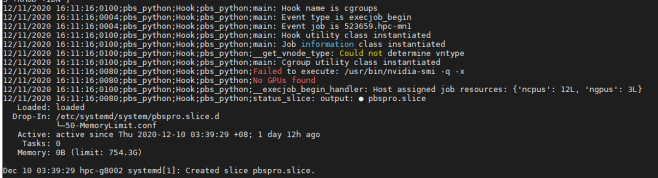

Step 1: Proceed to the Head Node (Scheduler)

Once you have the Job ID you wish to investigate, go to the Head Node and run the command below. The “-n” option is the number of days of logs to search.

% tracejob -n 10 jobID

From the tracejob output, you can see which node the job landed on. Next, go to that node and look for information in the mom_logs

% vim /var/spool/pbs/mom_logs/thedateyouarelookingat

For example,

% vim /var/spool/pbs/mom_logs/20201211

Using Vim, search for the Job ID

? yourjobID

You should be able to get a good hint of what has happened. In my case, my NVIDIA drivers were having issues.
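If you prefer not to open the log in an editor, the same search can be done with grep. This is a sketch under assumptions: it uses the log directory shown above and date-named log files in YYYYMMDD form, and the helper names `mom_log_path` and `find_job_in_log` are made up for illustration.

```shell
# Assumed location of the PBS MoM logs, as used above.
MOM_LOG_DIR="/var/spool/pbs/mom_logs"

# Hypothetical helper: path of the MoM log for a date in YYYYMMDD form.
mom_log_path() {
    printf '%s/%s\n' "$MOM_LOG_DIR" "$1"
}

# Hypothetical helper: print numbered log lines that mention a job ID.
# $1: log file, $2: job ID (matched as a fixed string, not a regex).
find_job_in_log() {
    grep -nF -- "$2" "$1"
}

log_file="$(mom_log_path 20201211)"
if [ -r "$log_file" ]; then
    find_job_in_log "$log_file" "yourjobID" || echo "no entries for that job"
else
    echo "cannot read $log_file (run this on the compute node)" >&2
fi
```

Matching the job ID as a fixed string avoids surprises from the dots that job IDs usually contain.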