This blog entry is summarised from the excellent article “Achieving Breakthrough MPI Performance with Fabric Collectives Offload” by Voltaire. It is also a continuation of the article “Performance Penalty for MPI Communication”.

A. Fabric-based collective offload solution.

There are three principles:

- Network Offload: Floating-point computation is offloaded from the server CPU to the network switch. The collective operations can easily be handled by the switch CPU and its cache.
- Topology-aware orchestration: The fabric Subnet Manager (SM) has complete knowledge of the fabric's physical topology and ensures that the collective logical tree is built to optimise collective communication accordingly.
- Communication isolation: Collective communication is isolated from the rest of the fabric traffic by making use of a VLAN.
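To make the first two principles concrete, here is a minimal toy model in Python. It is not Voltaire's algorithm. It compares a rank-order binomial reduction tree, which ignores the topology, with a tree that reduces inside each leaf switch first, and counts how many messages have to leave a leaf switch. The function names, the 16-rank job, the four leaf switches, and the round-robin placement are all assumptions made for illustration.

```python
from collections import defaultdict


def flat_tree_reduce(values, switch_of):
    """Binomial-tree sum that pairs ranks by rank number only (topology-unaware)."""
    partial = list(values)  # partial[rank] holds that rank's running sum
    n = len(partial)
    inter_switch_msgs = 0
    step = 1
    while step < n:
        for receiver in range(0, n, 2 * step):
            sender = receiver + step
            if sender < n:
                partial[receiver] += partial[sender]
                # Count the message if the two ranks sit on different leaf switches.
                if switch_of[sender] != switch_of[receiver]:
                    inter_switch_msgs += 1
        step *= 2
    return partial[0], inter_switch_msgs


def topology_aware_reduce(values, switch_of):
    """Sum inside each leaf switch first, then send one partial result per leaf upward."""
    per_switch = defaultdict(int)
    for rank, value in enumerate(values):
        per_switch[switch_of[rank]] += value  # traffic stays inside the leaf switch
    total = sum(per_switch.values())
    inter_switch_msgs = len(per_switch)  # one uplink message per leaf switch
    return total, inter_switch_msgs


# Hypothetical job: 16 ranks spread round-robin over 4 leaf switches.
values = list(range(16))
switch_of = [rank % 4 for rank in range(16)]

print("flat tree:     ", flat_tree_reduce(values, switch_of))
print("topology-aware:", topology_aware_reduce(values, switch_of))
```

Both functions return the same sum, but in this placement the rank-order tree sends 12 messages across leaf switches while the topology-aware tree sends 4. A real fabric adds link speeds, congestion, and multi-level trees, none of which this sketch models.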

Adapter-based collective offload

- The adapter-based offload approach delegates collective communication management and progress, as well as computation if needed, to the Host Channel Adapter (HCA). This shields the collective from OS noise, but cannot be expected to fix the full set of collective inefficiencies, such as fabric congestion and topology effects. According to the article, this approach also has scalability issues: as the size of the job increases, so does the number of HCA resources used. This in turn increases memory consumption and cache misses, adding latency to the collective operation.
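To see why OS noise matters to collectives at all, the following rough Python simulation may help. It assumes each rank is independently delayed by a fixed amount with a small probability, and that a collective completes only when the slowest rank arrives. The function name, the 1% noise probability, and the unit delay are hypothetical values chosen for illustration, not figures from the article.

```python
import random


def mean_collective_delay(num_ranks, trials=200, noise_prob=0.01,
                          noise_cost=1.0, seed=0):
    """Average extra delay of a collective when each rank may be hit by OS noise."""
    rng = random.Random(seed)  # fixed seed so the sketch is repeatable
    total = 0.0
    for _ in range(trials):
        # The collective waits for the slowest rank, so one noisy rank delays everyone.
        slowest = max(
            noise_cost if rng.random() < noise_prob else 0.0
            for _ in range(num_ranks)
        )
        total += slowest
    return total / trials


for ranks in (16, 128, 1024):
    print(ranks, "ranks ->", round(mean_collective_delay(ranks), 3))
```

Under these assumptions the average delay climbs towards the full noise cost as the job grows, because the chance that at least one rank is interrupted approaches certainty. Moving collective progress off the host CPU removes this source of delay, but as noted above it does nothing about congestion or topology.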

Voltaire Solution

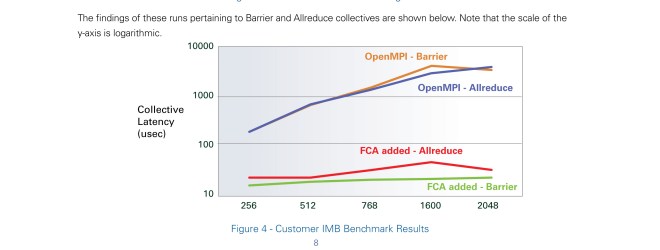

Voltaire takes the fabric-based collective offload approach with its Fabric Collective Accelerator (FCA) software. The solution is composed of a manager that orchestrates the initialisation of the collective communication tree and an MPI library that offloads the computation onto the Voltaire switch CPU. For more details, do look at “Achieving Breakthrough MPI Performance with Fabric Collectives Offload”, where you will find very useful graphs and details on this solution.

PDF Document: Achieving Breakthrough MPI Performance with Fabric Collectives Offload by Voltaire