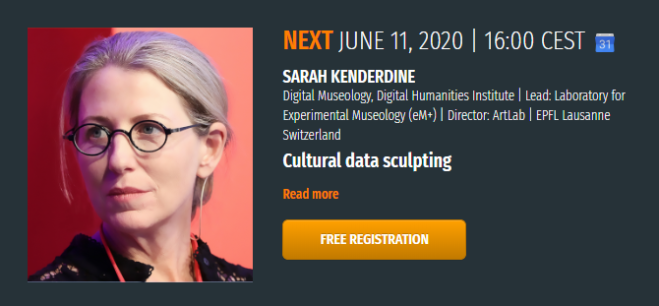

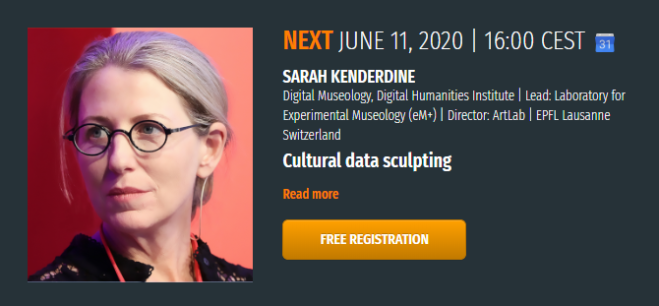

SPEAKER: Sarah Kenderdine | Digital Museology, Digital Humanities Institute |

Lead: Laboratory for Experimental Museology (eM+) | Director: ArtLab | EPFL Lausanne Switzerland

TITLE: Cultural data sculpting

DATE&TIME: Thursday, June 11, 2020 at 4:00 PM CEST

ABSTRACT:

In 1889 the curator G. B. Goode of the Smithsonian Institution delivered an anticipatory lecture entitled ‘The Future of the Museum’, in which he said this future museum would stand side by side with the library and the laboratory.

Convergence in collecting organisations, propelled by the liquidity of digital data, now sees them reconciled as information providers in a networked world.

The media theorist Lev Manovich described this world-order as “database logic,” whereby users transform the physical assets of cultural organisations into digital assets to be uploaded, downloaded, visualized and shared: users who treat institutions not as storehouses of physical objects, but rather as datasets to be manipulated. This presentation explores how such a mechanistic description can be replaced by ways in which computation has become ‘experiential, spatial and materialized; embedded and embodied’. It was at the birth of the Information Age in the 1950s that the prominent designer Gyorgy Kepes of MIT said “information abundance” should be a “landscape of the senses” that organizes both perception and practice. This ‘felt order’, he said, should be “a source of beauty, data transformed from its measured quantities and recreated as sensed forms exhibiting properties of harmony, rhythm and proportion.”

Archives call for the creation of new prosthetic architectures for the production and sharing of archival resources. At the intersection of immersive visualisation technologies, visual analytics, aesthetics and cultural (big) data, this presentation explores digital cultural heritage experiences of diverse archives from scientific, artistic and humanistic perspectives.

Exploiting a series of experimental and embodied platforms, the discussion argues for a reformulation of engagement with digital archives at the intersection of the tangible and intangible and as a convergence across domains. The performative interfaces and repertoires described demonstrate opportunities to reformulate narrative in a digital context and the ways they support personal affective engagement with cultural memory.